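YOLOv4 has been out for a few days. I have skimmed the paper, and many capable people have already published YOLOv4 implementations in various frameworks.

YOLOv4 paper: https://arxiv.org/abs/2004.10934

AlexeyAB's Darknet source implementation: https://github.com/AlexeyAB/darknet

This post analyzes a PyTorch implementation of YOLOv4. Thanks to Tianxiaomo for sharing the project: Pytorch-YoloV4

The author also shares the weight file. Download links: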

- baidu(https://pan.baidu.com/s/1dAGEW8cm-dqK14TbhhVetA Extraction code:dm5b)

- google(https://drive.google.com/open?id=1cewMfusmPjYWbrnuJRuKhPMwRe_b9PaT)

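The weight file yolov4.weights was trained on the COCO dataset, which has 80 object classes. The project currently supports inference only, not training.

My test environment was set up with Anaconda: PyTorch 1.0.1 and torchvision 0.2.1.

The project directory layout is as follows: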

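Run this command in a terminal:

# The command takes the cfg file path, the weight file path, and the image path
python demo.py cfg/yolov4.cfg yolov4.weights data/dog.jpg

Running it produces an image with the detections drawn on it: predictions.jpg

Now let's walk through the source code.

In demo.py, most of the work is done by the function detect(), shown here: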

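import time
from PIL import Image
# Darknet, do_detect, load_class_names and plot_boxes come from the project's own modules

def detect(cfgfile, weightfile, imgfile):
    m = Darknet(cfgfile)  # create the Darknet model object m

    m.print_network()  # print the network structure
    m.load_weights(weightfile)  # load the weights
    print('Loading weights from %s... Done!' % (weightfile))

    num_classes = 80
    if num_classes == 20:
        namesfile = 'data/voc.names'
    elif num_classes == 80:
        namesfile = 'data/coco.names'
    else:
        namesfile = 'data/names'

    use_cuda = 0  # whether to use CUDA; here the project runs on the CPU
    if use_cuda:
        m.cuda()  # move the model to GPU memory; the default GPU ID is 0

    img = Image.open(imgfile).convert('RGB')  # open the image with PIL
    sized = img.resize((m.width, m.height))

    for i in range(2):
        start = time.time()
        boxes = do_detect(m, sized, 0.5, 0.4, use_cuda)  # run detection; the returned boxes have already been through NMS
        finish = time.time()
        if i == 1:
            print('%s: Predicted in %f seconds.' % (imgfile, (finish - start)))

    class_names = load_class_names(namesfile)  # load the class names
    plot_boxes(img, boxes, 'predictions.jpg', class_names)  # draw the boxes and write the result image

While the Darknet() object is being created, it is initialized from the cfg file passed in. This mainly involves parsing the cfg file to extract each block, and then building the network structure (see the figure below).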

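First, how the network structure is parsed from the cfg file. This mainly calls parse_cfg() in tool/cfg.py, which returns blocks. The network structure looks like this (open the cfg file with the Netron network viewer; try it yourself to see the full version):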
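To make the idea of "blocks" concrete, here is a minimal sketch of a Darknet-style cfg parser. It is not the project's parse_cfg(): the function name parse_cfg_text and the inline sample are assumptions for illustration, and it assumes each section starts with a [type] header followed by key=value lines.

```python
def parse_cfg_text(text):
    """Parse Darknet-style cfg text into a list of dict blocks (illustrative only)."""
    blocks = []
    block = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith('#'):
            continue  # skip blank lines and comments
        if line.startswith('['):
            # A section header such as [convolutional] starts a new block
            if block is not None:
                blocks.append(block)
            block = {'type': line.strip('[]')}
        elif block is not None and '=' in line:
            key, value = line.split('=', 1)
            block[key.strip()] = value.strip()  # values are kept as strings
    if block is not None:
        blocks.append(block)
    return blocks


sample = """
[net]
width=608
height=608

[convolutional]
batch_normalize=1
filters=32
size=3
stride=1
pad=1
activation=mish
"""
blocks = parse_cfg_text(sample)
print(blocks[1]['type'], blocks[1]['filters'])
```

Each block is a plain dictionary, so later stages can decide what to build by looking at block['type'].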

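The network model is created by create_network() in darknet2pytorch.py, which builds the network from the blocks obtained by parsing the cfg. It first creates a ModuleList, then creates a Sequential() for each block and places that block's convolution, BN, and activation operations into it. In other words, each convolutional block corresponds to one Sequential().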
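The mapping from one cfg block to the named layers inside a Sequential() can be sketched without PyTorch. The helper plan_conv_block below is hypothetical (it is not part of the project) and only produces text descriptions. It assumes the usual Darknet convention that pad=1 means a padding of (size - 1) // 2, and that a convolution followed by batch normalization has no bias of its own.

```python
def plan_conv_block(block, conv_id, in_channels):
    """Describe the layers one [convolutional] block expands to (torch-free sketch)."""
    filters = int(block['filters'])
    size = int(block['size'])
    stride = int(block['stride'])
    # Assumed Darknet convention: pad=1 means padding of (size - 1) // 2
    pad = (size - 1) // 2 if int(block.get('pad', 0)) else 0
    use_bn = int(block.get('batch_normalize', 0)) == 1
    layers = [('conv%d' % conv_id,
               'Conv2d(%d, %d, kernel_size=%d, stride=%d, padding=%d, bias=%s)'
               % (in_channels, filters, size, stride, pad, not use_bn))]
    if use_bn:
        # With batch norm the convolution carries no bias of its own
        layers.append(('bn%d' % conv_id, 'BatchNorm2d(%d)' % filters))
    activation = block.get('activation', 'linear')
    if activation in ('mish', 'leaky'):
        layers.append(('%s%d' % (activation, conv_id), activation))
    return layers, filters  # filters becomes the next block's in_channels


first_block = {'type': 'convolutional', 'batch_normalize': '1', 'filters': '32',
               'size': '3', 'stride': '1', 'pad': '1', 'activation': 'mish'}
layers, out_channels = plan_conv_block(first_block, conv_id=1, in_channels=3)
for name, description in layers:
    print(name, description)
```

For the first block this yields the names conv1, bn1, and mish1, the same naming pattern that appears in the printed model structure.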

The resulting ModuleList has roughly the following structure:

Darknet(

(models): ModuleList(

(0): Sequential(

(conv1): Conv2d(3, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish1): Mish()

)

(1): Sequential(

(conv2): Conv2d(32, 64, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish2): Mish()

)

(2): Sequential(

(conv3): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish3): Mish()

)

(3): EmptyModule()

(4): Sequential(

(conv4): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn4): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish4): Mish()

)

(5): Sequential(

(conv5): Conv2d(64, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn5): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish5): Mish()

)

(6): Sequential(

(conv6): Conv2d(32, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn6): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish6): Mish()

)

(7): EmptyModule()

(8): Sequential(

(conv7): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn7): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish7): Mish()

)

(9): EmptyModule()

(10): Sequential(

(conv8): Conv2d(128, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn8): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish8): Mish()

)

(11): Sequential(

(conv9): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn9): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish9): Mish()

)

(12): Sequential(

(conv10): Conv2d(128, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn10): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish10): Mish()

)

(13): EmptyModule()

(14): Sequential(

(conv11): Conv2d(128, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn11): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish11): Mish()

)

(15): Sequential(

(conv12): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn12): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish12): Mish()

)

(16): Sequential(

(conv13): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn13): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish13): Mish()

)

(17): EmptyModule()

(18): Sequential(

(conv14): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn14): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish14): Mish()

)

(19): Sequential(

(conv15): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn15): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish15): Mish()

)

(20): EmptyModule()

(21): Sequential(

(conv16): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn16): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish16): Mish()

)

(22): EmptyModule()

(23): Sequential(

(conv17): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn17): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish17): Mish()

)

(24): Sequential(

(conv18): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn18): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish18): Mish()

)

(25): Sequential(

(conv19): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn19): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish19): Mish()

)

(26): EmptyModule()

(27): Sequential(

(conv20): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn20): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish20): Mish()

)

(28): Sequential(

(conv21): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn21): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish21): Mish()

)

(29): Sequential(

(conv22): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn22): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish22): Mish()

)

(30): EmptyModule()

(31): Sequential(

(conv23): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn23): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish23): Mish()

)

(32): Sequential(

(conv24): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn24): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish24): Mish()

)

(33): EmptyModule()

(34): Sequential(

(conv25): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn25): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish25): Mish()

)

(35): Sequential(

(conv26): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn26): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish26): Mish()

)

(36): EmptyModule()

(37): Sequential(

(conv27): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn27): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish27): Mish()

)

(38): Sequential(

(conv28): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn28): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish28): Mish()

)

(39): EmptyModule()

(40): Sequential(

(conv29): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn29): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish29): Mish()

)

(41): Sequential(

(conv30): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn30): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish30): Mish()

)

(42): EmptyModule()

(43): Sequential(

(conv31): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn31): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish31): Mish()

)

(44): Sequential(

(conv32): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn32): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish32): Mish()

)

(45): EmptyModule()

(46): Sequential(

(conv33): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn33): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish33): Mish()

)

(47): Sequential(

(conv34): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn34): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish34): Mish()

)

(48): EmptyModule()

(49): Sequential(

(conv35): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn35): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish35): Mish()

)

(50): Sequential(

(conv36): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn36): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish36): Mish()

)

(51): EmptyModule()

(52): Sequential(

(conv37): Conv2d(128, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn37): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish37): Mish()

)

(53): EmptyModule()

(54): Sequential(

(conv38): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn38): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish38): Mish()

)

(55): Sequential(

(conv39): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn39): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish39): Mish()

)

(56): Sequential(

(conv40): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn40): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish40): Mish()

)

(57): EmptyModule()

(58): Sequential(

(conv41): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn41): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish41): Mish()

)

(59): Sequential(

(conv42): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn42): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish42): Mish()

)

(60): Sequential(

(conv43): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn43): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish43): Mish()

)

(61): EmptyModule()

(62): Sequential(

(conv44): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn44): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish44): Mish()

)

(63): Sequential(

(conv45): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn45): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish45): Mish()

)

(64): EmptyModule()

(65): Sequential(

(conv46): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn46): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish46): Mish()

)

(66): Sequential(

(conv47): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn47): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish47): Mish()

)

(67): EmptyModule()

(68): Sequential(

(conv48): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn48): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish48): Mish()

)

(69): Sequential(

(conv49): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn49): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish49): Mish()

)

(70): EmptyModule()

(71): Sequential(

(conv50): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn50): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish50): Mish()

)

(72): Sequential(

(conv51): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn51): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish51): Mish()

)

(73): EmptyModule()

(74): Sequential(

(conv52): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn52): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish52): Mish()

)

(75): Sequential(

(conv53): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn53): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish53): Mish()

)

(76): EmptyModule()

(77): Sequential(

(conv54): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn54): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish54): Mish()

)

(78): Sequential(

(conv55): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn55): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish55): Mish()

)

(79): EmptyModule()

(80): Sequential(

(conv56): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn56): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish56): Mish()

)

(81): Sequential(

(conv57): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn57): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish57): Mish()

)

(82): EmptyModule()

(83): Sequential(

(conv58): Conv2d(256, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn58): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish58): Mish()

)

(84): EmptyModule()

(85): Sequential(

(conv59): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn59): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish59): Mish()

)

(86): Sequential(

(conv60): Conv2d(512, 1024, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn60): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish60): Mish()

)

(87): Sequential(

(conv61): Conv2d(1024, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn61): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish61): Mish()

)

(88): EmptyModule()

(89): Sequential(

(conv62): Conv2d(1024, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn62): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish62): Mish()

)

(90): Sequential(

(conv63): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn63): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish63): Mish()

)

(91): Sequential(

(conv64): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn64): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish64): Mish()

)

(92): EmptyModule()

(93): Sequential(

(conv65): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn65): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish65): Mish()

)

(94): Sequential(

(conv66): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn66): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish66): Mish()

)

(95): EmptyModule()

(96): Sequential(

(conv67): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn67): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish67): Mish()

)

(97): Sequential(

(conv68): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn68): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish68): Mish()

)

(98): EmptyModule()

(99): Sequential(

(conv69): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn69): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish69): Mish()

)

(100): Sequential(

(conv70): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn70): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish70): Mish()

)

(101): EmptyModule()

(102): Sequential(

(conv71): Conv2d(512, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn71): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish71): Mish()

)

(103): EmptyModule()

(104): Sequential(

(conv72): Conv2d(1024, 1024, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn72): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(mish72): Mish()

)

(105): Sequential(

(conv73): Conv2d(1024, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn73): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky73): LeakyReLU(negative_slope=0.1, inplace)

)

(106): Sequential(

(conv74): Conv2d(512, 1024, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn74): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky74): LeakyReLU(negative_slope=0.1, inplace)

)

(107): Sequential(

(conv75): Conv2d(1024, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn75): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky75): LeakyReLU(negative_slope=0.1, inplace)

)

(108): MaxPoolStride1()

(109): EmptyModule()

(110): MaxPoolStride1()

(111): EmptyModule()

(112): MaxPoolStride1()

(113): EmptyModule()

(114): Sequential(

(conv76): Conv2d(2048, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn76): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky76): LeakyReLU(negative_slope=0.1, inplace)

)

(115): Sequential(

(conv77): Conv2d(512, 1024, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn77): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky77): LeakyReLU(negative_slope=0.1, inplace)

)

(116): Sequential(

(conv78): Conv2d(1024, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn78): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky78): LeakyReLU(negative_slope=0.1, inplace)

)

(117): Sequential(

(conv79): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn79): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky79): LeakyReLU(negative_slope=0.1, inplace)

)

(118): Upsample()

(119): EmptyModule()

(120): Sequential(

(conv80): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn80): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky80): LeakyReLU(negative_slope=0.1, inplace)

)

(121): EmptyModule()

(122): Sequential(

(conv81): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn81): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky81): LeakyReLU(negative_slope=0.1, inplace)

)

(123): Sequential(

(conv82): Conv2d(256, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn82): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky82): LeakyReLU(negative_slope=0.1, inplace)

)

(124): Sequential(

(conv83): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn83): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky83): LeakyReLU(negative_slope=0.1, inplace)

)

(125): Sequential(

(conv84): Conv2d(256, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn84): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky84): LeakyReLU(negative_slope=0.1, inplace)

)

(126): Sequential(

(conv85): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn85): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky85): LeakyReLU(negative_slope=0.1, inplace)

)

(127): Sequential(

(conv86): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn86): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky86): LeakyReLU(negative_slope=0.1, inplace)

)

(128): Upsample()

(129): EmptyModule()

(130): Sequential(

(conv87): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn87): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky87): LeakyReLU(negative_slope=0.1, inplace)

)

(131): EmptyModule()

(132): Sequential(

(conv88): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn88): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky88): LeakyReLU(negative_slope=0.1, inplace)

)

(133): Sequential(

(conv89): Conv2d(128, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn89): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky89): LeakyReLU(negative_slope=0.1, inplace)

)

(134): Sequential(

(conv90): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn90): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky90): LeakyReLU(negative_slope=0.1, inplace)

)

(135): Sequential(

(conv91): Conv2d(128, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn91): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky91): LeakyReLU(negative_slope=0.1, inplace)

)

(136): Sequential(

(conv92): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn92): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky92): LeakyReLU(negative_slope=0.1, inplace)

)

(137): Sequential(

(conv93): Conv2d(128, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn93): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky93): LeakyReLU(negative_slope=0.1, inplace)

)

(138): Sequential(

(conv94): Conv2d(256, 255, kernel_size=(1, 1), stride=(1, 1))

)

(139): YoloLayer()

(140): EmptyModule()

(141): Sequential(

(conv95): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn95): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky95): LeakyReLU(negative_slope=0.1, inplace)

)

(142): EmptyModule()

(143): Sequential(

(conv96): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn96): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky96): LeakyReLU(negative_slope=0.1, inplace)

)

(144): Sequential(

(conv97): Conv2d(256, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn97): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky97): LeakyReLU(negative_slope=0.1, inplace)

)

(145): Sequential(

(conv98): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn98): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky98): LeakyReLU(negative_slope=0.1, inplace)

)

(146): Sequential(

(conv99): Conv2d(256, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn99): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky99): LeakyReLU(negative_slope=0.1, inplace)

)

(147): Sequential(

(conv100): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn100): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky100): LeakyReLU(negative_slope=0.1, inplace)

)

(148): Sequential(

(conv101): Conv2d(256, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn101): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky101): LeakyReLU(negative_slope=0.1, inplace)

)

(149): Sequential(

(conv102): Conv2d(512, 255, kernel_size=(1, 1), stride=(1, 1))

)

(150): YoloLayer()

(151): EmptyModule()

(152): Sequential(

(conv103): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn103): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky103): LeakyReLU(negative_slope=0.1, inplace)

)

(153): EmptyModule()

(154): Sequential(

(conv104): Conv2d(1024, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn104): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky104): LeakyReLU(negative_slope=0.1, inplace)

)

(155): Sequential(

(conv105): Conv2d(512, 1024, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn105): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky105): LeakyReLU(negative_slope=0.1, inplace)

)

(156): Sequential(

(conv106): Conv2d(1024, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn106): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky106): LeakyReLU(negative_slope=0.1, inplace)

)

(157): Sequential(

(conv107): Conv2d(512, 1024, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn107): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky107): LeakyReLU(negative_slope=0.1, inplace)

)

(158): Sequential(

(conv108): Conv2d(1024, 512, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn108): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky108): LeakyReLU(negative_slope=0.1, inplace)

)

(159): Sequential(

(conv109): Conv2d(512, 1024, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn109): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(leaky109): LeakyReLU(negative_slope=0.1, inplace)

)

(160): Sequential(

(conv110): Conv2d(1024, 255, kernel_size=(1, 1), stride=(1, 1))

)

(161): YoloLayer()

)

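)

Back in detect() in demo.py: once the Darknet object has been built, the network structure is printed, and then load_weights() in darknet2pytorch.py is called to load the weight file. Here is what the values in the weight file are and how they are ordered.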

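For convolutional layers without their own bias (those followed by BN), the values loaded from yolov4.weights are ordered as follows: first the BN bias (beta), then the BN weight (gamma), then the BN running mean, then the BN running variance, and finally the convolution weights, as shown in the figure below, where buf holds the contents loaded from yolov4.weights. The first convolution in the network has 32 kernels, so its BatchNorm2d also has 32 bias values, and the convolution has 3x3x3x32 = 864 weights (3 input channels because the image has three channels, a 3x3 kernel, and 32 output kernels).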
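The ordering described above can be turned into slice boundaries. The following is a small sketch, not the project's loading code: conv_bn_layout is a hypothetical helper, and start is assumed to be the position in buf where this layer's values begin (any file header is assumed to have been skipped already).

```python
def conv_bn_layout(start, in_channels, out_channels, kernel_size):
    """Return ([(name, begin, end), ...], next_start) for one conv + BN layer."""
    sizes = [
        ('bn_bias', out_channels),
        ('bn_weight', out_channels),
        ('bn_running_mean', out_channels),
        ('bn_running_var', out_channels),
        ('conv_weight', out_channels * in_channels * kernel_size * kernel_size),
    ]
    layout = []
    offset = start
    for name, count in sizes:
        layout.append((name, offset, offset + count))
        offset += count
    return layout, offset


# First layer of the network: 3 input channels, 32 kernels of size 3x3
layout, next_start = conv_bn_layout(start=0, in_channels=3, out_channels=32, kernel_size=3)
for name, begin, end in layout:
    print('%-16s buf[%d:%d] (%d values)' % (name, begin, end, end - begin))
```

The returned next_start is where the following layer's values would begin, so the same helper can be called layer after layer.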

The following block types produce no weights during training, so nothing needs to be read from yolov4.weights for them:

elif block['type'] == 'maxpool':
    pass
elif block['type'] == 'reorg':
    pass
elif block['type'] == 'upsample':
    pass
elif block['type'] == 'route':
    pass
elif block['type'] == 'shortcut':
    pass
elif block['type'] == 'region':
    pass
elif block['type'] == 'yolo':
    pass
elif block['type'] == 'avgpool':
    pass
elif block['type'] == 'softmax':
    pass
elif block['type'] == 'cost':
    pass

With the cfg file parsed, the model created, and the weights loaded, the next step is to run detection. This mainly calls do_detect() in utils/utils.py, which in demo.py is this line: boxes = do_detect(m, sized, 0.5, 0.4, use_cuda)

import time
import numpy as np
import torch
from PIL import Image
# nms and get_region_boxes1 come from the project's own utils module

def do_detect(model, img, conf_thresh, nms_thresh, use_cuda=1):
    model.eval()  # put the model in inference mode
    t0 = time.time()

    if isinstance(img, Image.Image):
        width = img.width
        height = img.height
        img = torch.ByteTensor(torch.ByteStorage.from_buffer(img.tobytes()))
        img = img.view(height, width, 3).transpose(0, 1).transpose(0, 2).contiguous()  # CxHxW
        img = img.view(1, 3, height, width)  # reshape to BxCxHxW
        img = img.float().div(255.0)  # [0-255] --> [0-1]
    elif type(img) == np.ndarray and len(img.shape) == 3:  # cv2 image
        img = torch.from_numpy(img.transpose(2, 0, 1)).float().div(255.0).unsqueeze(0)
    elif type(img) == np.ndarray and len(img.shape) == 4:
        img = torch.from_numpy(img.transpose(0, 3, 1, 2)).float().div(255.0)
    else:
        print("unknown image type")
        exit(-1)

    if use_cuda:
        img = img.cuda()
    img = torch.autograd.Variable(img)

    list_boxes = model(img)  # runs the model's forward pass and returns the outputs of the three YOLO layers

    anchors = [12, 16, 19, 36, 40, 28, 36, 75, 76, 55, 72, 146, 142, 110, 192, 243, 459, 401]
    num_anchors = 9  # 9 anchors in total across the 3 YOLO layers
    anchor_masks = [[0, 1, 2], [3, 4, 5], [6, 7, 8]]
    strides = [8, 16, 32]  # downsampling factor of each YOLO layer relative to the input image
    anchor_step = len(anchors) // num_anchors
    boxes = []
    for i in range(3):
        masked_anchors = []
        for m in anchor_masks[i]:
            masked_anchors += anchors[m * anchor_step:(m + 1) * anchor_step]
        masked_anchors = [anchor / strides[i] for anchor in masked_anchors]
        # Note: the threshold 0.6 is hard-coded here; conf_thresh is not used.
        # .cpu() is added so the conversion also works when use_cuda is set.
        boxes.append(get_region_boxes1(list_boxes[i].data.cpu().numpy(), 0.6, 80, masked_anchors, len(anchor_masks[i])))
        # boxes.append(get_region_boxes(list_boxes[i], 0.6, 80, masked_anchors, len(anchor_masks[i])))

    if img.shape[0] > 1:
        bboxs_for_imgs = [
            boxes[0][index] + boxes[1][index] + boxes[2][index]
            for index in range(img.shape[0])]
        # run NMS separately for each image
        boxes = [nms(bboxs, nms_thresh) for bboxs in bboxs_for_imgs]
    else:
        boxes = boxes[0][0] + boxes[1][0] + boxes[2][0]
        boxes = nms(boxes, nms_thresh)

    return boxes  # return the boxes that survive NMS

After the forward pass, the model's outputs are stored in list_boxes. Because there are three YOLO output layers, list_boxes contains three sub-lists.

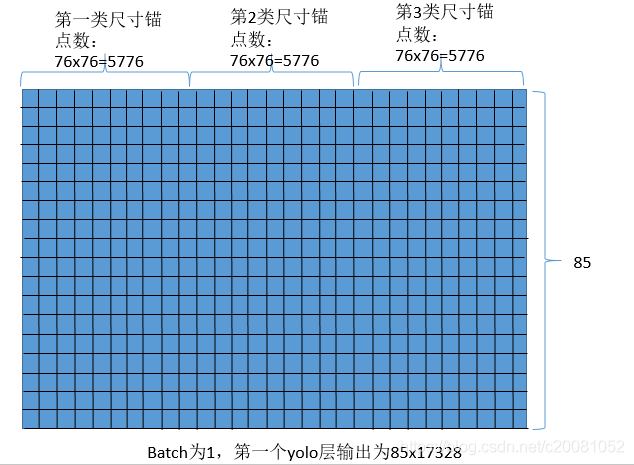

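list_boxes[0] holds the output of the first YOLO layer. Its feature map is downsampled by a factor of 8 relative to the input, i.e. strides[0]; for a 608x608 image the feature map is 608/8 = 76 on each side. The layer's output has shape [batch, (classnum+4+1)*num_anchors, feature_h, feature_w]. For COCO with 80 classes, a single test image, and 3 anchors per feature-map location, this YOLO layer outputs [1,255,76,76]. The corresponding anchor sizes are [1.5,2.0,2.375,4.5,5.0,3.5] (these six numbers are the w and h of the three anchors, ordered w1,h1,w2,h2,w3,h3).

Likewise, the second YOLO layer's output has shape [1,255,38,38], with anchor sizes [2.25,4.6875,4.75,3.4375,4.5,9.125].

The third YOLO layer's output has shape [1,255,19,19], with anchor sizes [4.4375,3.4375,6.0,7.59375,14.34375,12.53125].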
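The shapes and anchor sizes quoted for the three layers can be recomputed from the anchors, anchor_masks, and strides values used in do_detect(). This is a small check rather than project code; layer_summary is a hypothetical helper and a square 608x608 input is assumed.

```python
anchors = [12, 16, 19, 36, 40, 28, 36, 75, 76, 55, 72, 146, 142, 110, 192, 243, 459, 401]
anchor_masks = [[0, 1, 2], [3, 4, 5], [6, 7, 8]]
strides = [8, 16, 32]
num_classes = 80
input_size = 608  # assumed square network input


def layer_summary(i, batch=1):
    """Return (output_shape, scaled_anchors) for the i-th YOLO layer."""
    grid = input_size // strides[i]
    channels = (num_classes + 4 + 1) * len(anchor_masks[i])
    scaled = []
    for m in anchor_masks[i]:
        w, h = anchors[2 * m], anchors[2 * m + 1]
        # Anchors are given in input pixels; divide by the stride to get grid units
        scaled += [w / strides[i], h / strides[i]]
    return [batch, channels, grid, grid], scaled


for i in range(3):
    shape, scaled = layer_summary(i)
    print(shape, scaled)
```

Dividing by the stride expresses each anchor in units of that layer's grid cells, which is why the same pixel anchors give different numbers per layer.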

do_detect() mainly calls get_region_boxes1(output, conf_thresh, num_classes, anchors, num_anchors, only_objectness=1, validation=False) to parse the output of the forward pass; NMS is then applied to the parsed boxes.

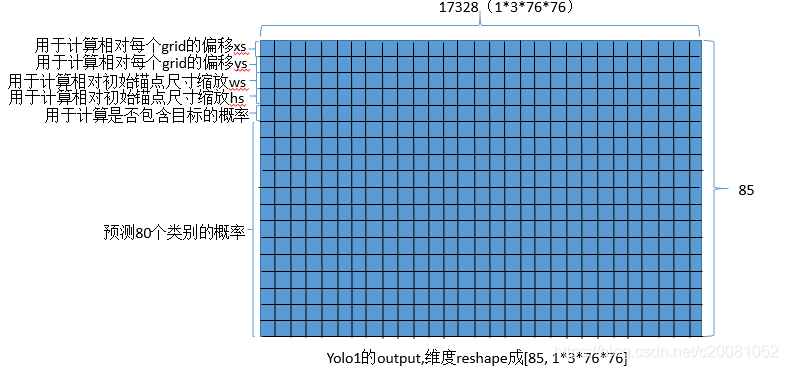

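Analysis of each YOLO layer's output: for the first YOLO layer, the output shape is [1,85*3,76,76]. It is reshaped to [85, 1*3*76*76], meaning that 1*3*76*76 anchors are making predictions, and each anchor predicts 80 class probabilities, 4 position values, and 1 confidence for whether it contains an object. The figure below shows the data of the first YOLO output layer (the number of grid cells drawn is not accurate; it is for illustration only).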

The code corresponding to each output is:
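As a rough illustration of how the position and confidence values are typically decoded in YOLOv3-style heads, here is a scalar sketch. It is an assumption based on the standard formulation, not code copied from get_region_boxes1, and decode_cell is a hypothetical name. The final division by the grid size mirrors the box = [bcx / w, bcy / h, bw / w, bh / h, ...] line in the project.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def decode_cell(raw, cx, cy, anchor_w, anchor_h, grid_w, grid_h):
    """Decode one anchor's raw values [tx, ty, tw, th, to] at grid cell (cx, cy)."""
    tx, ty, tw, th, to = raw
    bcx = sigmoid(tx) + cx          # box centre x, in grid units
    bcy = sigmoid(ty) + cy          # box centre y, in grid units
    bw = math.exp(tw) * anchor_w    # anchor sizes are already divided by the stride
    bh = math.exp(th) * anchor_h
    det_conf = sigmoid(to)          # objectness confidence
    # Normalise by the grid size so the box is relative to the whole image
    return [bcx / grid_w, bcy / grid_h, bw / grid_w, bh / grid_h, det_conf]


# All-zero raw values at the centre cell of the 76x76 layer, first anchor
box = decode_cell([0.0, 0.0, 0.0, 0.0, 0.0], cx=38, cy=38,
                  anchor_w=1.5, anchor_h=2.0, grid_w=76, grid_h=76)
print(box)
```

With all raw values at zero, the box sits at the middle of its cell, has exactly the anchor's size, and has an objectness of 0.5.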

Continuing with the figure above, here is how the data output by a single YOLO layer is arranged. The diagram is as follows:
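One way to picture the flattened axis of length batch*anchor_num*h*w is as a nested index over image, anchor, row, and column. The ordering used below (image first, then anchor, then row, then column) is an assumption consistent with reshaping [batch, 85*3, h, w] into [85, batch*3*h*w]; flat_index and unflatten are hypothetical helper names.

```python
def flat_index(b, a, cy, cx, num_anchors, h, w):
    """Index of (image b, anchor a, row cy, column cx) along the flattened axis."""
    return ((b * num_anchors + a) * h + cy) * w + cx


def unflatten(ind, num_anchors, h, w):
    """Inverse of flat_index: recover (b, a, cy, cx) from a flat index."""
    cx = ind % w
    cy = (ind // w) % h
    a = (ind // (w * h)) % num_anchors
    b = ind // (w * h * num_anchors)
    return b, a, cy, cx


total = 1 * 3 * 76 * 76  # number of predictions in the first YOLO layer
ind = flat_index(0, 2, 10, 5, num_anchors=3, h=76, w=76)
print(total, ind, unflatten(ind, num_anchors=3, h=76, w=76))
```

Under this layout, each of the 85 rows of the reshaped output holds one kind of value (a position value, the objectness, or a class score) for every flat index.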

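If the confidence meets the threshold, the predicted box is saved to a list (here ind is the index over all outputs, in the range 0 to batch*anchor_num*h*w):

if conf > conf_thresh:
    bcx = xs[ind]
    bcy = ys[ind]
    bw = ws[ind]
    bh = hs[ind]
    cls_max_conf = cls_max_confs[ind]
    cls_max_id = cls_max_ids[ind]
    box = [bcx / w, bcy / h, bw / w, bh / h, det_conf, cls_max_conf, cls_max_id]

For the three YOLO layers, each layer's output is first filtered simply by whether it contains an object (the objectness probability must exceed the threshold). The boxes that survive from the three layers are then merged into one list and passed through NMS. The code involved is: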

import torch
# bbox_iou comes from the project's own utils module

def nms(boxes, nms_thresh):
    if len(boxes) == 0:
        return boxes

    det_confs = torch.zeros(len(boxes))
    for i in range(len(boxes)):
        det_confs[i] = 1 - boxes[i][4]

    # sort is ascending, so sortIds orders the boxes from highest to lowest objectness
    _, sortIds = torch.sort(det_confs)
    out_boxes = []
    for i in range(len(boxes)):
        box_i = boxes[sortIds[i]]
        if box_i[4] > 0:
            out_boxes.append(box_i)  # keep the remaining box with the highest objectness
            # Compare every later box_j with box_i; if the IoU exceeds the threshold,
            # set box_j's objectness to 0 so that it is never selected
            for j in range(i + 1, len(boxes)):
                box_j = boxes[sortIds[j]]
                if bbox_iou(box_i, box_j, x1y1x2y2=False) > nms_thresh:
                    # print(box_i, box_j, bbox_iou(box_i, box_j, x1y1x2y2=False))
                    box_j[4] = 0
    return out_boxes

Supplement:
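One note on the NMS code: bbox_iou is called with x1y1x2y2=False, which suggests the boxes are in centre format (cx, cy, w, h). The sketch below shows an IoU computation for that format. It is a generic version under that assumption, with the hypothetical name iou_center_format, and not the project's bbox_iou.

```python
def iou_center_format(box1, box2):
    """IoU of two boxes given as [cx, cy, w, h, ...]; extra fields are ignored."""
    def corners(box):
        cx, cy, w, h = box[:4]
        return cx - w / 2.0, cy - h / 2.0, cx + w / 2.0, cy + h / 2.0

    x1a, y1a, x2a, y2a = corners(box1)
    x1b, y1b, x2b, y2b = corners(box2)
    inter_w = min(x2a, x2b) - max(x1a, x1b)
    inter_h = min(y2a, y2b) - max(y1a, y1b)
    if inter_w <= 0 or inter_h <= 0:
        return 0.0  # the boxes do not overlap
    inter = inter_w * inter_h
    union = box1[2] * box1[3] + box2[2] * box2[3] - inter
    return inter / union


box_a = [0.5, 0.5, 0.2, 0.2]
box_b = [0.6, 0.5, 0.2, 0.2]   # same size, shifted right by half a width
box_c = [0.9, 0.9, 0.1, 0.1]   # far away from box_a
print(iou_center_format(box_a, box_b), iou_center_format(box_a, box_c))
```

With nms_thresh = 0.4 as passed in demo.py, a pair like box_a and box_b (IoU about 1/3) would both be kept, while a more heavily overlapping pair would have the lower-scoring box suppressed.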

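The Mish activation function mentioned in the paper:

The formula is as follows (where x is the input):

$$\mathrm{Mish}(x) = x \cdot \tanh\left(\ln\left(1 + e^{x}\right)\right)$$

Its curve looks like this: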

The PyTorch implementation is:

import torch

class Mish(torch.nn.Module):
    def __init__(self):
        super().__init__()

    def forward(self, x):
        # softplus(x) = ln(1 + e^x), so this is x * tanh(ln(1 + e^x))
        x = x * (torch.tanh(torch.nn.functional.softplus(x)))
        return x
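To get a feel for the function's values without any framework, here is a scalar version using only the standard library. It is an illustrative sketch; the cutoff of 20 used to sidestep overflow in exp is an arbitrary choice for this example.

```python
import math


def mish(x):
    """Scalar Mish: x * tanh(softplus(x)), with softplus(x) = ln(1 + e^x)."""
    # For large x, softplus(x) is effectively x; this also avoids overflow in exp
    softplus = x if x > 20 else math.log1p(math.exp(x))
    return x * math.tanh(softplus)


for v in (-5.0, -1.0, 0.0, 1.0, 5.0):
    print(v, mish(v))
```

The printed values show the characteristic shape: slightly negative for negative inputs, zero at zero, and close to the identity for large positive inputs.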

The TensorFlow implementation is:

import tensorflow as tf

from tensorflow.keras.layers import Activation

from tensorflow.keras.utils import get_custom_objects

class Mish(Activation):

def __init__(self, activation, **kwargs):

super(Mish, self).__init__(activation, **kwargs)

self.__name__ = 'Mish'

def mish(inputs):

return inputs * tf.math.tanh(tf.math.softplus(inputs))

get_custom_objects().update({'Mish': Mish(mish)})

#使用方法

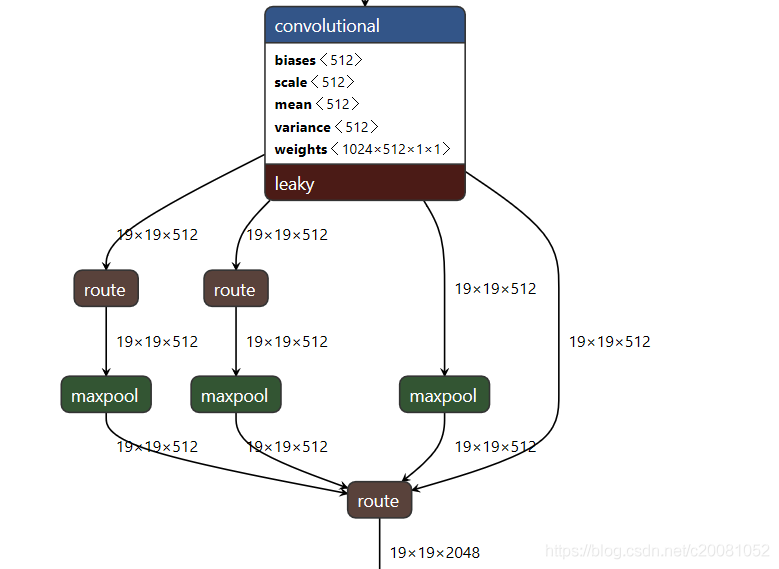

x = Activation('Mish')(x)文中提到的SPP结构大致是:

How to select which GPU ID PyTorch runs on: https://www.cnblogs.com/jfdwd/p/11434332.html
