7 Visual Odometry 1

Theory


Visual Odometry


1. Concept: what is odometry?

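In the odometry problem, we want to measure the trajectory of a moving object. This can be done in many different ways. For example, by mounting encoder disks on a car's wheels, we can measure how far the wheels have turned and thereby estimate the car's motion. Alternatively, we can measure the car's velocity and acceleration and integrate them over time to obtain its displacement. A device (hardware plus algorithms) that performs this kind of motion estimation is called odometry.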

2. Properties: what characterizes odometry?

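An important property of odometry is that it only concerns motion over a local time span, most often the motion between two instants. If time is sampled at some interval, we can estimate the object's motion within each interval. Because every such estimate is corrupted by noise, the estimation error from earlier instants accumulates into the motion at later instants; this phenomenon is called drift.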

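3. Concept: what is visual odometry?

The goal of visual odometry (VO) is to estimate camera motion from captured images. Its main approaches are feature-based methods and direct methods. Feature-based methods are currently the mainstream: they keep working when noise is high and the camera moves fast, but the resulting map consists only of sparse feature points. Direct methods do not need to extract features and can build dense maps, but they are computationally expensive and less robust.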


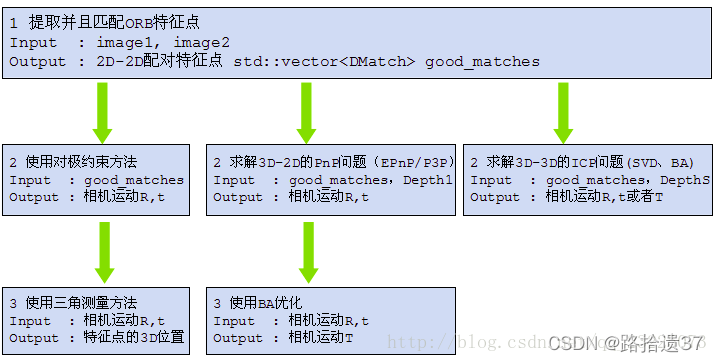

Contents of this chapter

1 How feature points are extracted and matched

2 Estimating camera motion from matched 2D-2D feature points with the epipolar constraint

3 Estimating the spatial position of a point from 2D-2D matches with triangulation

4 The 3D-2D PnP problem: linear solutions and the Bundle Adjustment solution

5 The 3D-3D ICP problem: linear solutions and the Bundle Adjustment solution


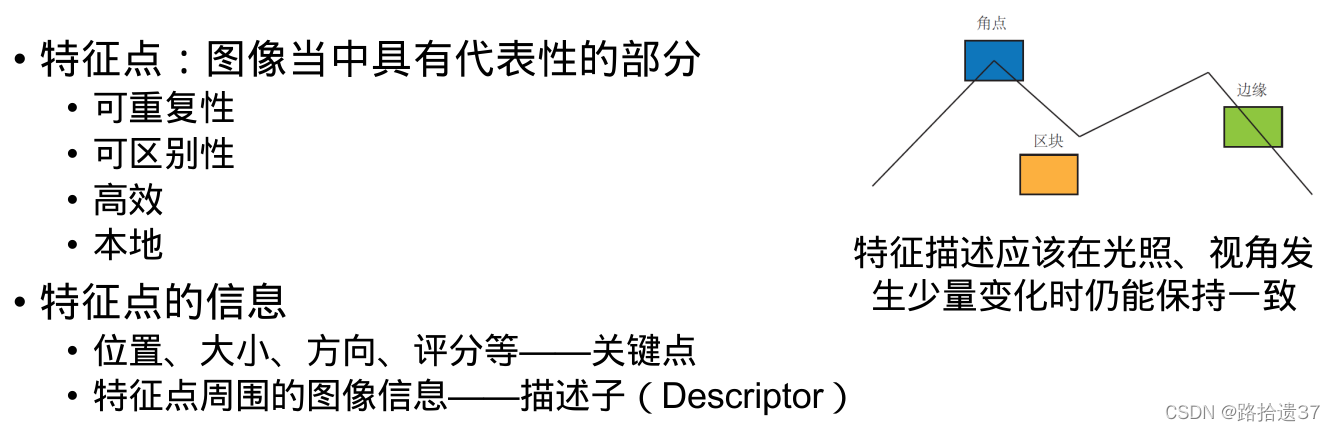

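Common feature points

SIFT: Scale-Invariant Feature Transform

SURF: Speeded-Up Robust Features

ORB (Oriented FAST and Rotated BRIEF): a representative real-time image feature

Extracting ORB features takes two steps:

FAST corner extraction

BRIEF descriptor computation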


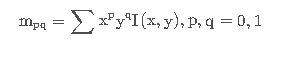

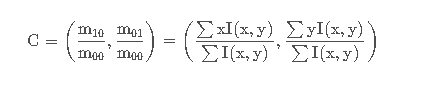

Improved FAST keypoints (Oriented FAST)

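FAST is a kind of corner that mainly detects places where the local pixel intensity changes sharply, and it is known for its speed. Its core idea is that if a pixel differs greatly from its neighboring pixels (much brighter or much darker), it is more likely to be a corner.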

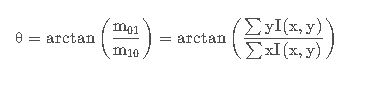

Centroid of the image patch

Orientation of the feature point

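BRIEF (Binary Robust Independent Elementary Features) is a binary descriptor: its descriptor vector consists of many 0s and 1s, where each bit encodes the intensity relationship between two pixels p and q near the keypoint. If p > q, the bit is 1; otherwise it is 0.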

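The positions of p and q are chosen randomly, roughly following some probability distribution. Choosing 128 such pairs of p and q yields a 128-dimensional binary vector.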


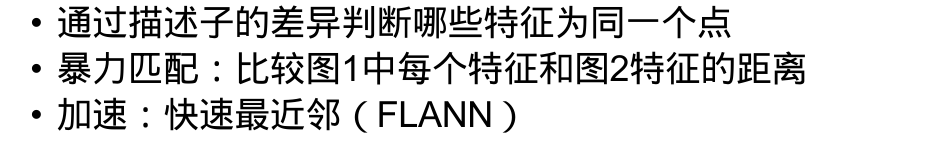

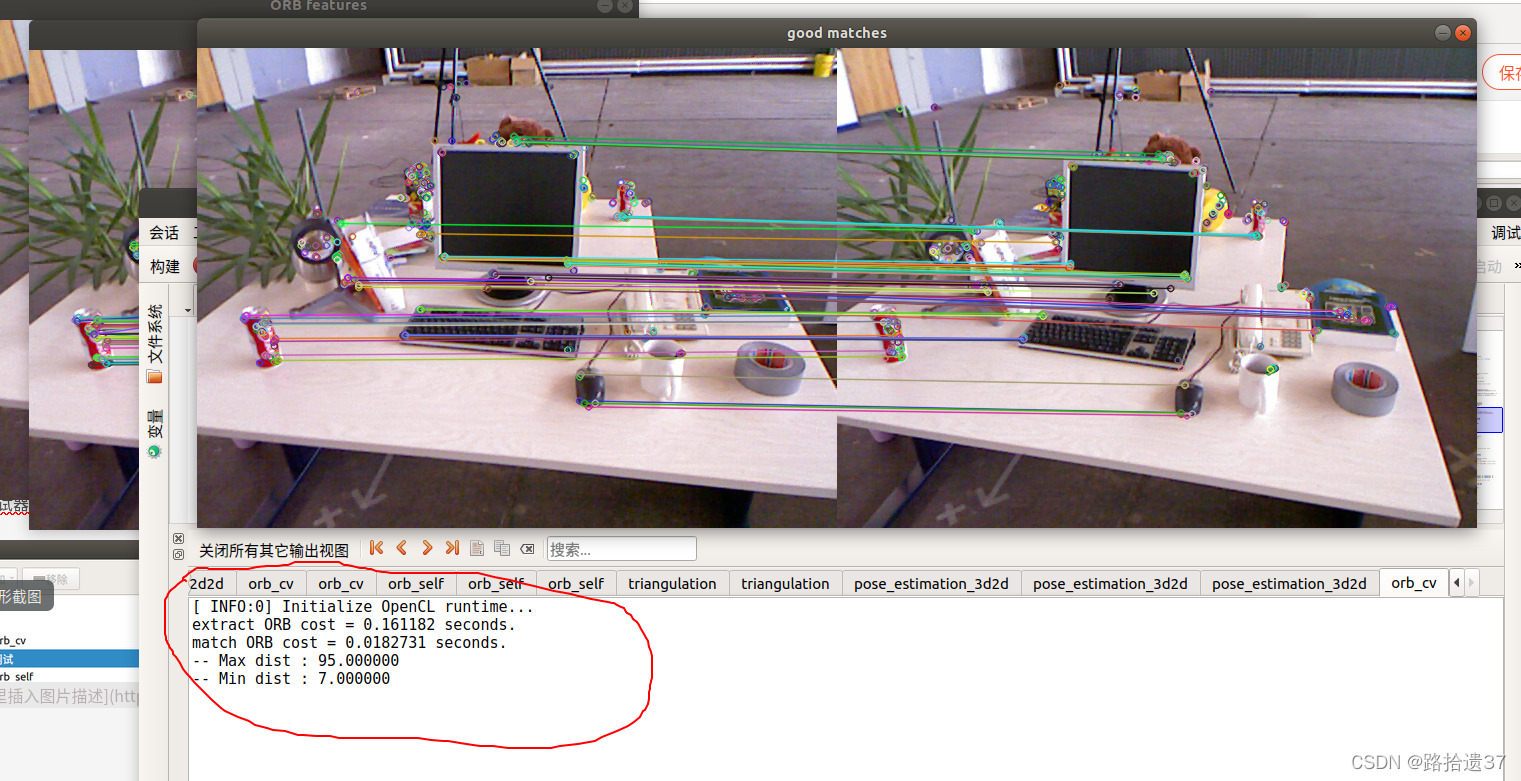

Feature matching

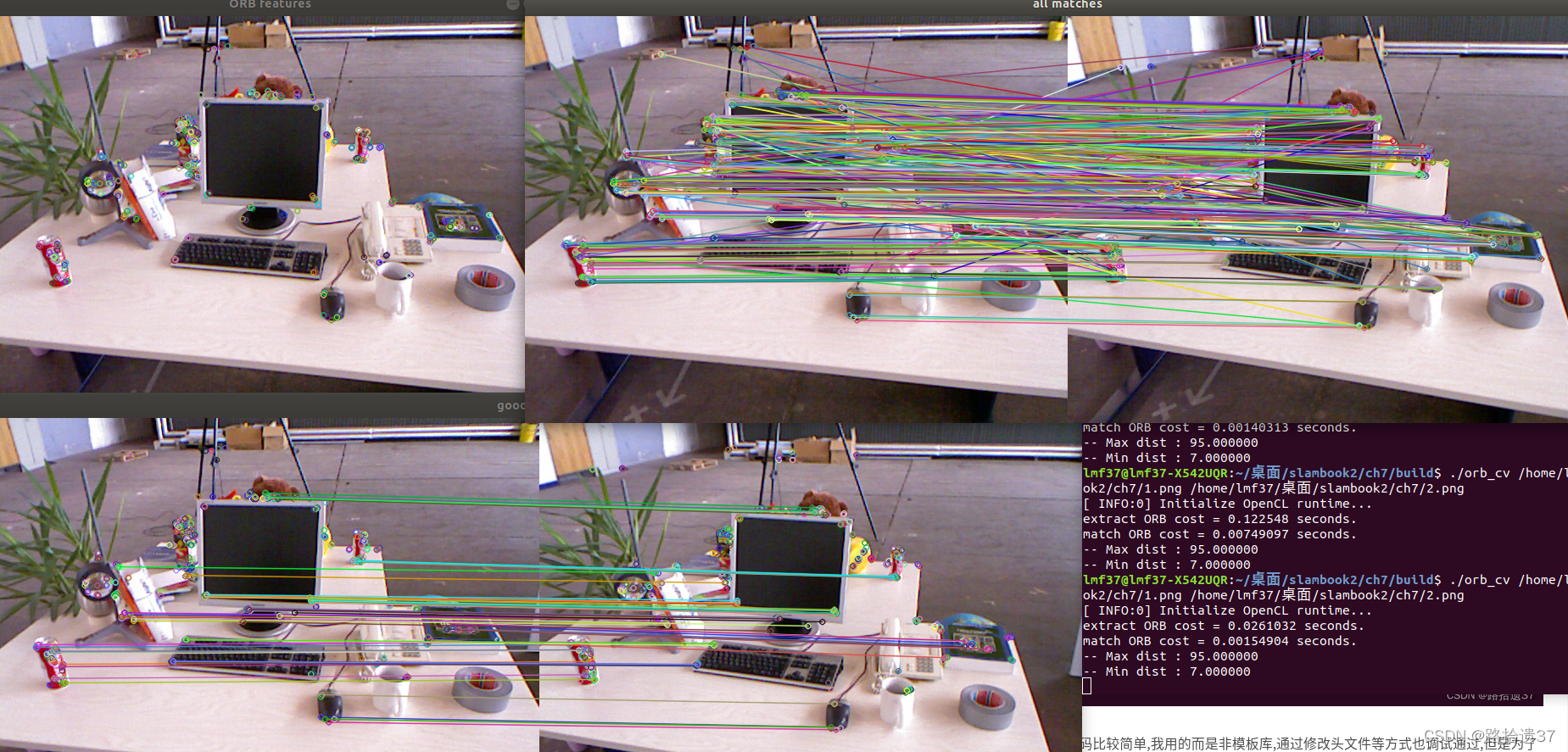

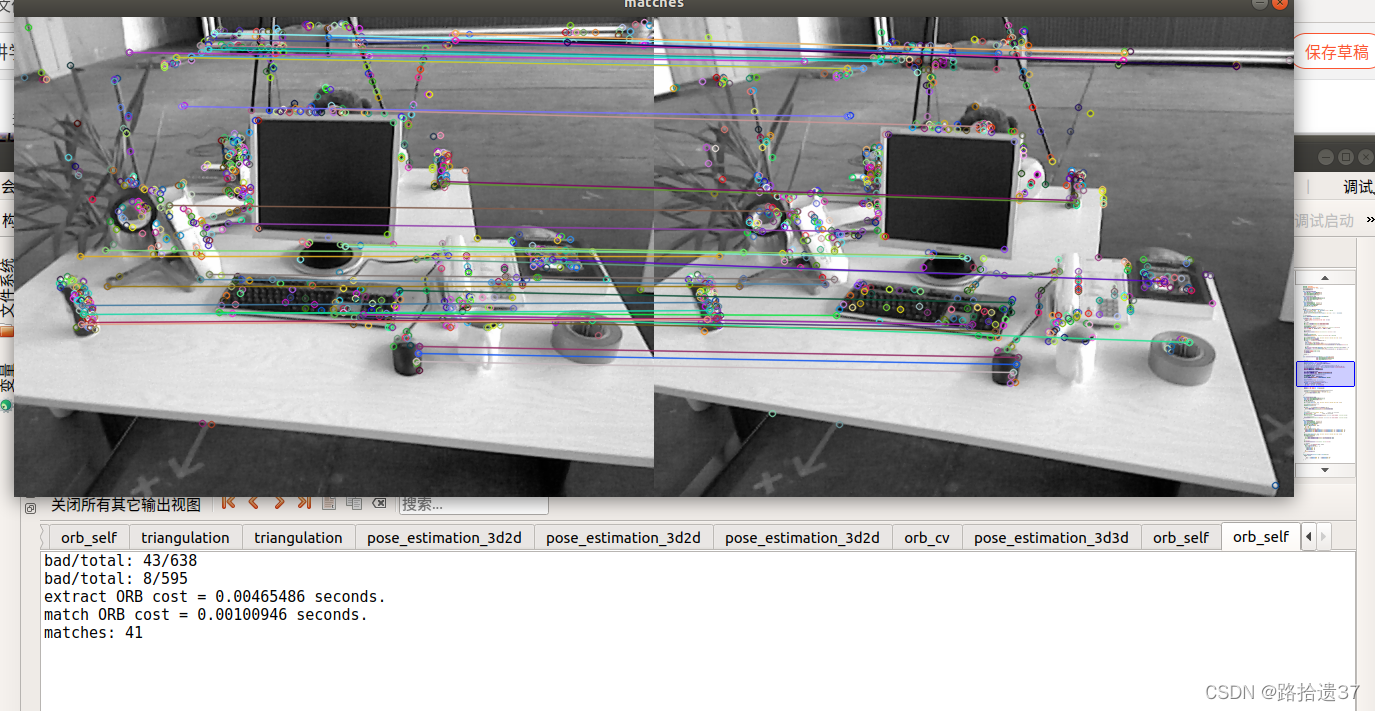

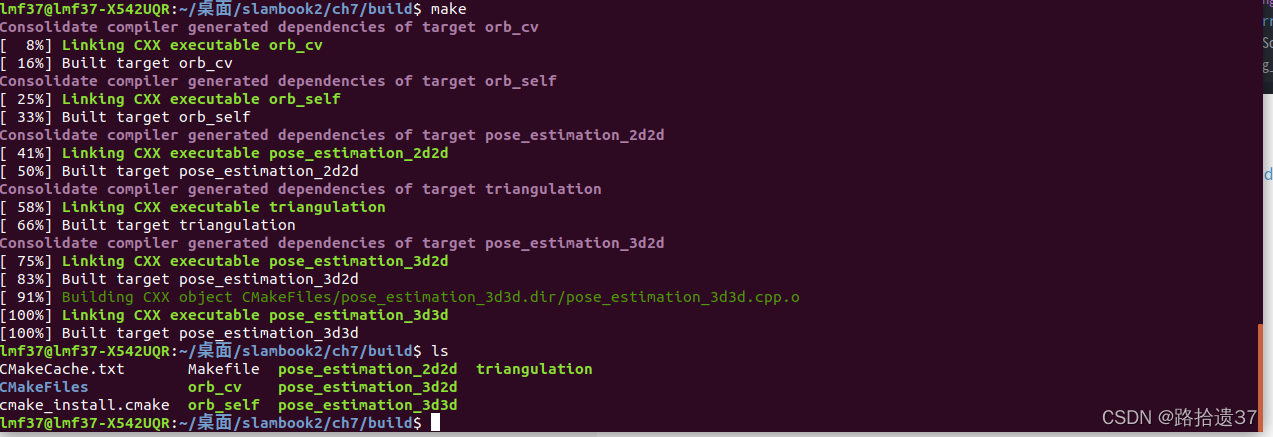

Compiling and running the code gives the following result:

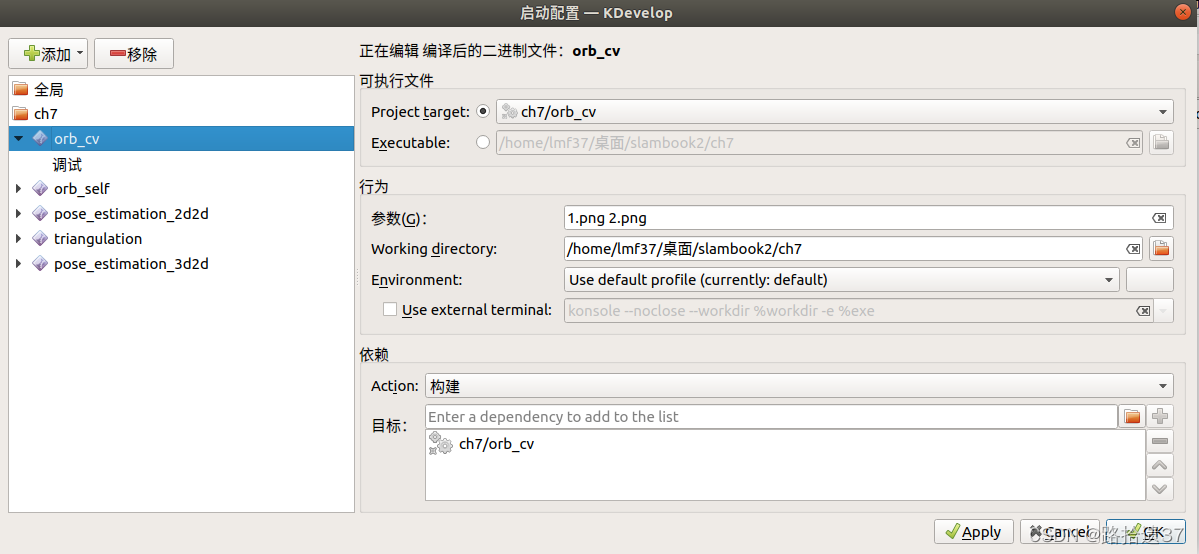

Debugging with KDevelop: pay attention to how the program arguments are passed.

The debugger also has to be switched to GDB.

The code, with comments, is as follows:

```cpp
#include <algorithm>
#include <cassert>
#include <chrono>
#include <cstdio>
#include <iostream>
#include <opencv2/core/core.hpp>
#include <opencv2/features2d/features2d.hpp>
#include <opencv2/highgui/highgui.hpp>

using namespace std;
using namespace cv;

int main(int argc, char **argv) {
  if (argc != 3) {
    cout << "usage: feature_extraction img1 img2" << endl;
    return 1;
  }
  //-- Read the images (IMREAD_COLOR replaces CV_LOAD_IMAGE_COLOR, which OpenCV 4 removed)
  Mat img_1 = imread(argv[1], IMREAD_COLOR);
  Mat img_2 = imread(argv[2], IMREAD_COLOR);
  assert(img_1.data != nullptr && img_2.data != nullptr);

  //-- Initialization
  std::vector<KeyPoint> keypoints_1, keypoints_2;  // keypoints
  Mat descriptors_1, descriptors_2;                 // descriptors
  Ptr<FeatureDetector> detector = ORB::create();        // Oriented FAST corners
  Ptr<DescriptorExtractor> descriptor = ORB::create();  // BRIEF descriptors
  Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");  // Hamming distance

  //-- Step 1: detect Oriented FAST corner locations
  chrono::steady_clock::time_point t1 = chrono::steady_clock::now();
  detector->detect(img_1, keypoints_1);
  detector->detect(img_2, keypoints_2);

  //-- Step 2: compute BRIEF descriptors at the corner locations
  descriptor->compute(img_1, keypoints_1, descriptors_1);
  descriptor->compute(img_2, keypoints_2, descriptors_2);
  chrono::steady_clock::time_point t2 = chrono::steady_clock::now();
  chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);
  cout << "extract ORB cost = " << time_used.count() << " seconds. " << endl;

  Mat outimg1;
  drawKeypoints(img_1, keypoints_1, outimg1, Scalar::all(-1), DrawMatchesFlags::DEFAULT);
  imshow("ORB features", outimg1);

  //-- Step 3: match the BRIEF descriptors of the two images using Hamming distance
  vector<DMatch> matches;  // unfiltered matches
  t1 = chrono::steady_clock::now();
  matcher->match(descriptors_1, descriptors_2, matches);
  t2 = chrono::steady_clock::now();
  time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);
  cout << "match ORB cost = " << time_used.count() << " seconds. " << endl;
  if (matches.empty()) {  // minmax_element below would otherwise dereference end()
    cout << "no matches found" << endl;
    return 1;
  }

  //-- Step 4: filter the matched pairs
  // Compute the minimum and maximum distances
  auto min_max = minmax_element(matches.begin(), matches.end(),
                                [](const DMatch &m1, const DMatch &m2) { return m1.distance < m2.distance; });
  double min_dist = min_max.first->distance;
  double max_dist = min_max.second->distance;
  printf("-- Max dist : %f \n", max_dist);
  printf("-- Min dist : %f \n", min_dist);

  // A match is treated as wrong when its descriptor distance exceeds twice the minimum distance.
  // Because the minimum distance can be very small, the empirical value 30 is used as a lower bound.
  std::vector<DMatch> good_matches;  // filtered matches: distance <= max(2 * min_dist, 30)
  for (const DMatch &m : matches) {
    if (m.distance <= std::max(2 * min_dist, 30.0)) {
      good_matches.push_back(m);
    }
  }

  //-- Step 5: draw the matching results
  Mat img_match;
  Mat img_goodmatch;
  drawMatches(img_1, keypoints_1, img_2, keypoints_2, matches, img_match);
  drawMatches(img_1, keypoints_1, img_2, keypoints_2, good_matches, img_goodmatch);
  imshow("all matches", img_match);
  imshow("good matches", img_goodmatch);
  waitKey(0);

  return 0;
}
```


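Results of the hand-written ORB:

I haven't fully understood this part yet, so I'm leaving a placeholder here to come back to later. (sob)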


7.3 2D-2D: Epipolar Geometry

For the theory of 2D-2D, 2D-3D and triangulation, see the blog post:

视觉里程计1 (Visual Odometry 1)

Compiling and running the code gives the following output:

```text
[ INFO:0] Initialize OpenCL runtime...
-- Max dist : 95.000000
-- Min dist : 7.000000
一共找到了81组匹配点
fundamental_matrix is
[5.435453065936294e-06, 0.0001366043242989641, -0.02140890086948122;
-0.0001321142229824704, 2.339475702778057e-05, -0.006332906454396256;
0.02107630352202776, -0.00366683395295285, 1]
essential_matrix is
[0.01724015832721706, 0.328054335794133, 0.0473747783144249;
-0.3243229585962962, 0.03292958445202408, -0.6262554366073018;
-0.005885857752320116, 0.6253830041920333, 0.0153167864909267]
homography_matrix is
[0.91317517918067, -0.1092435315821776, 29.95860009981271;
0.02223560352310949, 0.9826008005061946, 6.50891083956826;
-0.0001001560381023939, 0.0001037779436396116, 1]
R is
[0.9985534106102478, -0.05339308467584758, 0.006345444621108698;
0.05321959721496264, 0.9982715997131746, 0.02492965459802003;
-0.007665548311697523, -0.02455588961730239, 0.9996690690694516]
t is
[-0.8829934995085544;
-0.05539655431450562;
0.4661048182498402]
t^R=
[-0.02438126572381045, -0.4639388908753606, -0.06699805400667856;
0.4586619266358499, -0.04656946493536188, 0.8856589319599302;
0.008323859859529846, -0.8844251262060034, -0.0216612071874423]
epipolar constraint = [0.004334754136721797]
epipolar constraint = [-0.0002809243685121809]
epipolar constraint = [-0.001438247945977744]
epipolar constraint = [0.0003269033947393973]
epipolar constraint = [-0.0003553231638489529]
epipolar constraint = [0.001284545296795364]
epipolar constraint = [0.0007111119070243033]
epipolar constraint = [0.0005809963024551446]
epipolar constraint = [-0.0004569505410570683]
epipolar constraint = [-0.0001985091674428126]
epipolar constraint = [0.0009954466629851014]
epipolar constraint = [0.004183444398105557]
epipolar constraint = [0.0003301500278483499]
epipolar constraint = [0.000433468422895756]
epipolar constraint = [0.002166463717508241]
epipolar constraint = [0.0008612142972820036]
epipolar constraint = [0.006260134367574832]
epipolar constraint = [0.007343864270669354]
epipolar constraint = [0.0006997299583792263]
epipolar constraint = [-0.0002735148772005716]
epipolar constraint = [-5.272337012321437e-07]
epipolar constraint = [0.0007372565015377822]
epipolar constraint = [-0.0006697357792934122]
epipolar constraint = [0.003123484301720714]
epipolar constraint = [-0.001231690598807428]
epipolar constraint = [0.0002668695748940936]
epipolar constraint = [0.004005543462373876]
epipolar constraint = [0.0003056705066544624]
epipolar constraint = [-0.003108414268527718]
epipolar constraint = [-1.915351944714594e-06]
epipolar constraint = [0.0006933459945522302]
epipolar constraint = [-1.20842520190817e-05]
epipolar constraint = [0.001931054288970224]
epipolar constraint = [-0.001327411567348349]
epipolar constraint = [-0.001158918062891631]
epipolar constraint = [-0.001858904428330923]
epipolar constraint = [0.0001556314587936695]
epipolar constraint = [-0.002373723544446836]
epipolar constraint = [0.004805889122330598]
epipolar constraint = [-0.0009747832347852675]
epipolar constraint = [0.0006571155616519331]
epipolar constraint = [0.002337204394122716]
epipolar constraint = [0.004765516758509947]
epipolar constraint = [-0.001750870050808317]
epipolar constraint = [0.001092859392827487]
epipolar constraint = [0.0006769492505509164]
epipolar constraint = [0.0003307429644405918]
epipolar constraint = [0.001876564994448115]
epipolar constraint = [0.001832354276950641]
epipolar constraint = [7.744615696386736e-07]
epipolar constraint = [-0.0007964236477854686]
epipolar constraint = [0.0001236409258935089]
epipolar constraint = [1.386843227244028e-06]
epipolar constraint = [0.0001450355326295741]
epipolar constraint = [0.00109731208246224]
epipolar constraint = [-0.002227053499071974]
epipolar constraint = [0.002240603253167585]
epipolar constraint = [0.0002359213388878484]
epipolar constraint = [0.003035338951631217]
epipolar constraint = [0.002650788007581513]
epipolar constraint = [-0.0002311129052587763]
epipolar constraint = [0.003158726652554816]
epipolar constraint = [0.00318443380492206]
epipolar constraint = [-0.0003030993775385848]
epipolar constraint = [-0.004151424678597325]
epipolar constraint = [0.000962908812924268]
epipolar constraint = [0.00166825104315349]
epipolar constraint = [-0.001953002815440141]
epipolar constraint = [-0.0024677215713114]
epipolar constraint = [0.0008218799177506786]
epipolar constraint = [1.369310688559278e-06]
epipolar constraint = [-0.001891259735584946]
epipolar constraint = [-0.0001304333417016107]
epipolar constraint = [0.0002565573533065552]
epipolar constraint = [0.006010380518284446]
epipolar constraint = [-0.0004837802950156331]
epipolar constraint = [0.00547529124350349]
epipolar constraint = [0.001722398975726524]
epipolar constraint = [-0.0004945848903547823]
epipolar constraint = [-0.004259380631293275]
epipolar constraint = [-0.0007738612238716719]
```

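For reference, the matrices printed above are tied together by the standard two-view relations below. Here $\boldsymbol{x} = \boldsymbol{K}^{-1}\boldsymbol{p}$ are the normalized coordinates of a homogeneous pixel $\boldsymbol{p}$, the program's convention is $\boldsymbol{P}_2 = \boldsymbol{R}\boldsymbol{P}_1 + \boldsymbol{t}$, and $\boldsymbol{t}^\wedge$ is the skew-symmetric matrix of $\boldsymbol{t}$ (the `t_x` in the code):

$$
\boldsymbol{x}_2^\top \boldsymbol{E}\, \boldsymbol{x}_1 = 0,\quad \boldsymbol{E} = \boldsymbol{t}^\wedge \boldsymbol{R};
\qquad
\boldsymbol{p}_2^\top \boldsymbol{F}\, \boldsymbol{p}_1 = 0,\quad \boldsymbol{F} = \boldsymbol{K}^{-\top} \boldsymbol{E}\, \boldsymbol{K}^{-1}.
$$

Since $\boldsymbol{E}$ is only defined up to scale, the printed `essential_matrix` and `t^R` should differ by a constant factor. Dividing entry by entry, for example $-0.4639 / 0.3281$ and $0.8857 / (-0.6263)$, gives about $-1.414 \approx -\sqrt{2}$ for all nine entries. That matches the other printed values: `t` has unit length, so $\boldsymbol{t}^\wedge\boldsymbol{R}$ has Frobenius norm $\sqrt{2}$, while the printed `essential_matrix` has Frobenius norm close to 1. The printed "epipolar constraint" values are $\boldsymbol{x}_2^\top \boldsymbol{t}^\wedge \boldsymbol{R}\, \boldsymbol{x}_1$ for each match; they are small but not exactly zero because the matched pixel positions are noisy.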

The code, with comments, is as follows (I did not fully understand some of the class calls, so I'm leaving a small placeholder here):

```cpp
#include <algorithm>
#include <cassert>
#include <cstdio>
#include <iostream>
#include <opencv2/core/core.hpp>
#include <opencv2/features2d/features2d.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/calib3d/calib3d.hpp>
// #include "extra.h" // use this if in OpenCV2

using namespace std;
using namespace cv;

/****************************************************
 * This program shows how to estimate camera motion from 2D-2D feature matches
 * **************************************************/

void find_feature_matches(
  const Mat &img_1, const Mat &img_2,
  std::vector<KeyPoint> &keypoints_1,
  std::vector<KeyPoint> &keypoints_2,
  std::vector<DMatch> &matches);

void pose_estimation_2d2d(
  const std::vector<KeyPoint> &keypoints_1,
  const std::vector<KeyPoint> &keypoints_2,
  const std::vector<DMatch> &matches,
  Mat &R, Mat &t);

// Convert pixel coordinates to normalized camera coordinates
Point2d pixel2cam(const Point2d &p, const Mat &K);

int main(int argc, char **argv) {
  if (argc != 3) {
    cout << "usage: pose_estimation_2d2d img1 img2" << endl;
    return 1;
  }
  //-- Read the images (IMREAD_COLOR replaces CV_LOAD_IMAGE_COLOR, which OpenCV 4 removed)
  Mat img_1 = imread(argv[1], IMREAD_COLOR);
  Mat img_2 = imread(argv[2], IMREAD_COLOR);
  assert(img_1.data && img_2.data && "Can not load images!");

  vector<KeyPoint> keypoints_1, keypoints_2;
  vector<DMatch> matches;
  find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches);
  cout << "一共找到了" << matches.size() << "组匹配点" << endl;  // "found N matched point pairs in total"

  //-- Estimate the motion between the two images
  Mat R, t;
  pose_estimation_2d2d(keypoints_1, keypoints_2, matches, R, t);

  //-- Check E = t^R * scale (t_x is the skew-symmetric matrix t^)
  Mat t_x =
    (Mat_<double>(3, 3) << 0, -t.at<double>(2, 0), t.at<double>(1, 0),
      t.at<double>(2, 0), 0, -t.at<double>(0, 0),
      -t.at<double>(1, 0), t.at<double>(0, 0), 0);

  cout << "t^R=" << endl << t_x * R << endl;

  //-- Check the epipolar constraint y2^T * t^ * R * y1 = 0 on normalized coordinates
  Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1);
  for (const DMatch &m : matches) {
    Point2d pt1 = pixel2cam(keypoints_1[m.queryIdx].pt, K);
    Mat y1 = (Mat_<double>(3, 1) << pt1.x, pt1.y, 1);
    Point2d pt2 = pixel2cam(keypoints_2[m.trainIdx].pt, K);
    Mat y2 = (Mat_<double>(3, 1) << pt2.x, pt2.y, 1);
    Mat d = y2.t() * t_x * R * y1;
    cout << "epipolar constraint = " << d << endl;
  }
  return 0;
}

void find_feature_matches(const Mat &img_1, const Mat &img_2,
                          std::vector<KeyPoint> &keypoints_1,
                          std::vector<KeyPoint> &keypoints_2,
                          std::vector<DMatch> &matches) {
  //-- Initialization
  Mat descriptors_1, descriptors_2;
  // used in OpenCV 3 (also works in OpenCV 4)
  Ptr<FeatureDetector> detector = ORB::create();
  Ptr<DescriptorExtractor> descriptor = ORB::create();
  // use this if you are in OpenCV2
  // Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" );
  // Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" );
  Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

  //-- Step 1: detect Oriented FAST corner locations
  detector->detect(img_1, keypoints_1);
  detector->detect(img_2, keypoints_2);

  //-- Step 2: compute BRIEF descriptors at the corner locations
  descriptor->compute(img_1, keypoints_1, descriptors_1);
  descriptor->compute(img_2, keypoints_2, descriptors_2);

  //-- Step 3: match the BRIEF descriptors of the two images using Hamming distance
  vector<DMatch> match;
  //BFMatcher matcher ( NORM_HAMMING );
  matcher->match(descriptors_1, descriptors_2, match);

  //-- Step 4: filter the matched pairs
  double min_dist = 10000, max_dist = 0;
  // Find the minimum and maximum distance over all matches,
  // i.e. the distances of the most similar and the least similar pairs
  for (const DMatch &m : match) {
    double dist = m.distance;
    if (dist < min_dist) min_dist = dist;
    if (dist > max_dist) max_dist = dist;
  }
  printf("-- Max dist : %f \n", max_dist);
  printf("-- Min dist : %f \n", min_dist);

  // A match is treated as wrong when its descriptor distance exceeds twice the minimum distance.
  // Because the minimum distance can be very small, the empirical value 30 is used as a lower bound.
  for (const DMatch &m : match) {
    if (m.distance <= std::max(2 * min_dist, 30.0)) {
      matches.push_back(m);
    }
  }
}

Point2d pixel2cam(const Point2d &p, const Mat &K) {
  return Point2d(
    (p.x - K.at<double>(0, 2)) / K.at<double>(0, 0),
    (p.y - K.at<double>(1, 2)) / K.at<double>(1, 1)
  );
}

void pose_estimation_2d2d(const std::vector<KeyPoint> &keypoints_1,
                          const std::vector<KeyPoint> &keypoints_2,
                          const std::vector<DMatch> &matches,
                          Mat &R, Mat &t) {
  // Camera intrinsics, TUM Freiburg2
  Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1);

  //-- Convert the matched points to vector<Point2f>
  vector<Point2f> points1;
  vector<Point2f> points2;
  for (const DMatch &m : matches) {
    points1.push_back(keypoints_1[m.queryIdx].pt);
    points2.push_back(keypoints_2[m.trainIdx].pt);
  }

  //-- Compute the fundamental matrix (FM_8POINT replaces the C-API constant CV_FM_8POINT)
  Mat fundamental_matrix = findFundamentalMat(points1, points2, FM_8POINT);
  cout << "fundamental_matrix is " << endl << fundamental_matrix << endl;

  //-- Compute the essential matrix
  Point2d principal_point(325.1, 249.7);  // principal point, TUM dataset calibration
  double focal_length = 521;               // focal length, TUM dataset calibration
  Mat essential_matrix = findEssentialMat(points1, points2, focal_length, principal_point);
  cout << "essential_matrix is " << endl << essential_matrix << endl;

  //-- Compute the homography
  //-- The scene in this example is not planar, so the homography is of limited use
  Mat homography_matrix = findHomography(points1, points2, RANSAC, 3);
  cout << "homography_matrix is " << endl << homography_matrix << endl;

  //-- Recover rotation and translation from the essential matrix
  // recoverPose is only available from OpenCV 3 onward
  recoverPose(essential_matrix, points1, points2, R, t, focal_length, principal_point);
  cout << "R is " << endl << R << endl;
  cout << "t is " << endl << t << endl;
}
```

#include <iostream> #include <opencv2/core/core.hpp> #include <opencv2/features2d/features2d.hpp> #include <opencv2/highgui/highgui.hpp> #include <opencv2/calib3d/calib3d.hpp> // #include "extra.h" // use this if in OpenCV2 using namespace std; using namespace cv; /**************************************************** * 本程序演示了如何使用2D-2D的特征匹配估计相机运动 * **************************************************/ void find_feature_matches( const Mat &img_1, const Mat &img_2, std::vector<KeyPoint> &keypoints_1, std::vector<KeyPoint> &keypoints_2, std::vector<DMatch> &matches); void pose_estimation_2d2d( std::vector<KeyPoint> keypoints_1, std::vector<KeyPoint> keypoints_2, std::vector<DMatch> matches, Mat &R, Mat &t); // 像素坐标转相机归一化坐标 Point2d pixel2cam(const Point2d &p, const Mat &K); int main(int argc, char **argv) { if (argc != 3) { cout << "usage: pose_estimation_2d2d img1 img2" << endl; return 1; } //-- 读取图像 Mat img_1 = imread(argv[1], CV_LOAD_IMAGE_COLOR); Mat img_2 = imread(argv[2], CV_LOAD_IMAGE_COLOR); assert(img_1.data && img_2.data && "Can not load images!"); vector<KeyPoint> keypoints_1, keypoints_2; vector<DMatch> matches; find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches); cout << "一共找到了" << matches.size() << "组匹配点" << endl; //-- 估计两张图像间运动 Mat R, t; pose_estimation_2d2d(keypoints_1, keypoints_2, matches, R, t); //-- 验证E=t^R*scale Mat t_x = (Mat_<double>(3, 3) << 0, -t.at<double>(2, 0), t.at<double>(1, 0), t.at<double>(2, 0), 0, -t.at<double>(0, 0), -t.at<double>(1, 0), t.at<double>(0, 0), 0); cout << "t^R=" << endl << t_x * R << endl; //-- 验证对极约束 Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); for (DMatch m: matches) { Point2d pt1 = pixel2cam(keypoints_1[m.queryIdx].pt, K); Mat y1 = (Mat_<double>(3, 1) << pt1.x, pt1.y, 1); Point2d pt2 = pixel2cam(keypoints_2[m.trainIdx].pt, K); Mat y2 = (Mat_<double>(3, 1) << pt2.x, pt2.y, 1); Mat d = y2.t() * t_x * R * y1; cout << "epipolar constraint = " << d << endl; } return 0; } void 
find_feature_matches(const Mat &img_1, const Mat &img_2, std::vector<KeyPoint> &keypoints_1, std::vector<KeyPoint> &keypoints_2, std::vector<DMatch> &matches) { //-- 初始化 Mat descriptors_1, descriptors_2; // used in OpenCV3 Ptr<FeatureDetector> detector = ORB::create(); Ptr<DescriptorExtractor> descriptor = ORB::create(); // use this if you are in OpenCV2 // Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" ); // Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" ); Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming"); //-- 第一步:检测 Oriented FAST 角点位置 detector->detect(img_1, keypoints_1); detector->detect(img_2, keypoints_2); //-- 第二步:根据角点位置计算 BRIEF 描述子 descriptor->compute(img_1, keypoints_1, descriptors_1); descriptor->compute(img_2, keypoints_2, descriptors_2); //-- 第三步:对两幅图像中的BRIEF描述子进行匹配,使用 Hamming 距离 vector<DMatch> match; //BFMatcher matcher ( NORM_HAMMING ); matcher->match(descriptors_1, descriptors_2, match); //-- 第四步:匹配点对筛选 double min_dist = 10000, max_dist = 0; //找出所有匹配之间的最小距离和最大距离, 即是最相似的和最不相似的两组点之间的距离 for (int i = 0; i < descriptors_1.rows; i++) { double dist = match[i].distance; if (dist < min_dist) min_dist = dist; if (dist > max_dist) max_dist = dist; } printf("-- Max dist : %f \n", max_dist); printf("-- Min dist : %f \n", min_dist); //当描述子之间的距离大于两倍的最小距离时,即认为匹配有误.但有时候最小距离会非常小,设置一个经验值30作为下限. 
for (int i = 0; i < descriptors_1.rows; i++) { if (match[i].distance <= max(2 * min_dist, 30.0)) { matches.push_back(match[i]); } } } Point2d pixel2cam(const Point2d &p, const Mat &K) { return Point2d ( (p.x - K.at<double>(0, 2)) / K.at<double>(0, 0), (p.y - K.at<double>(1, 2)) / K.at<double>(1, 1) ); } void pose_estimation_2d2d(std::vector<KeyPoint> keypoints_1, std::vector<KeyPoint> keypoints_2, std::vector<DMatch> matches, Mat &R, Mat &t) { // 相机内参,TUM Freiburg2 Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); //-- 把匹配点转换为vector<Point2f>的形式 vector<Point2f> points1; vector<Point2f> points2; for (int i = 0; i < (int) matches.size(); i++) { points1.push_back(keypoints_1[matches[i].queryIdx].pt); points2.push_back(keypoints_2[matches[i].trainIdx].pt); } //-- 计算基础矩阵 Mat fundamental_matrix; fundamental_matrix = findFundamentalMat(points1, points2, CV_FM_8POINT); cout << "fundamental_matrix is " << endl << fundamental_matrix << endl; //-- 计算本质矩阵 Point2d principal_point(325.1, 249.7); //相机光心, TUM dataset标定值 double focal_length = 521; //相机焦距, TUM dataset标定值 Mat essential_matrix; essential_matrix = findEssentialMat(points1, points2, focal_length, principal_point); cout << "essential_matrix is " << endl << essential_matrix << endl; //-- 计算单应矩阵 //-- 但是本例中场景不是平面,单应矩阵意义不大 Mat homography_matrix; homography_matrix = findHomography(points1, points2, RANSAC, 3); cout << "homography_matrix is " << endl << homography_matrix << endl; //-- 从本质矩阵中恢复旋转和平移信息. // 此函数仅在Opencv3中提供 recoverPose(essential_matrix, points1, points2, R, t, focal_length, principal_point); cout << "R is " << endl << R << endl; cout << "t is " << endl << t << endl; }


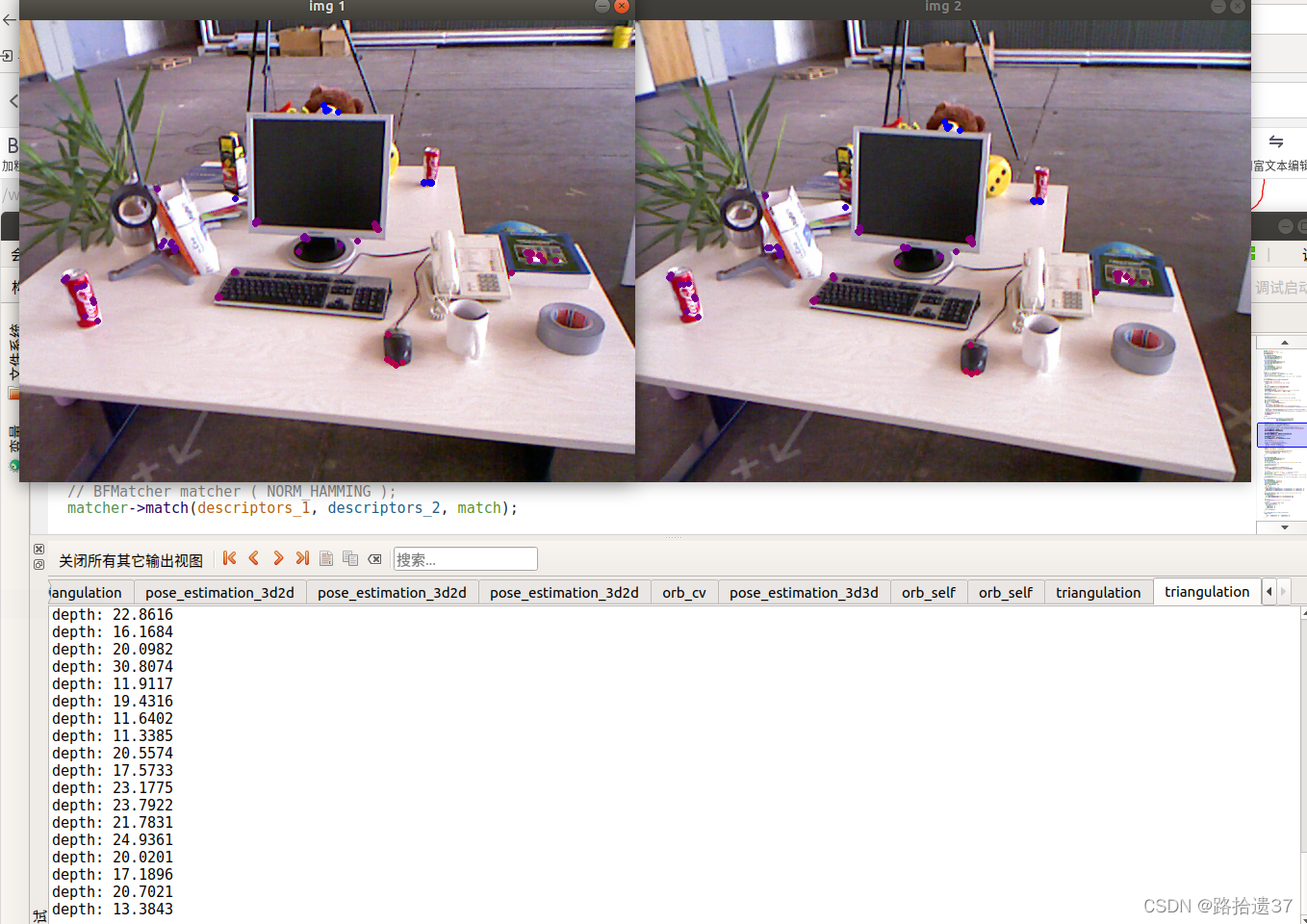
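The 2D-2D program checks `E = t^R` and the epipolar constraint x2ᵀ t^ R x1 = 0 on real matches, with x1, x2 the normalized coordinates produced by `pixel2cam`. The sketch below repeats that check on a synthetic point, assuming P2 = R·P1 + t (the convention the triangulation code uses for camera 2 = [R | t]) and the TUM intrinsics from the code. All helper names (`skew`, `rot_y`, `project`, `epipolar_residual`, ...) are illustrative, not OpenCV API.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

Vec3 add(const Vec3 &a, const Vec3 &b) { return {a[0] + b[0], a[1] + b[1], a[2] + b[2]}; }
double dot(const Vec3 &a, const Vec3 &b) { return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]; }

Vec3 mul(const Mat3 &A, const Vec3 &v) {
  Vec3 r{};
  for (int i = 0; i < 3; ++i) r[i] = A[i][0] * v[0] + A[i][1] * v[1] + A[i][2] * v[2];
  return r;
}

Mat3 mul(const Mat3 &A, const Mat3 &B) {
  Mat3 C{};
  for (int i = 0; i < 3; ++i)
    for (int j = 0; j < 3; ++j)
      for (int k = 0; k < 3; ++k) C[i][j] += A[i][k] * B[k][j];
  return C;
}

// Skew-symmetric matrix t^ such that t^ * v equals the cross product t x v.
Mat3 skew(const Vec3 &t) {
  return {{{0, -t[2], t[1]}, {t[2], 0, -t[0]}, {-t[1], t[0], 0}}};
}

// Rotation by angle a (radians) about the y axis.
Mat3 rot_y(double a) {
  return {{{std::cos(a), 0, std::sin(a)}, {0, 1, 0}, {-std::sin(a), 0, std::cos(a)}}};
}

// Pinhole projection of a camera-frame point to pixel coordinates.
void project(const Vec3 &P, double fx, double fy, double cx, double cy, double &u, double &v) {
  u = fx * P[0] / P[2] + cx;
  v = fy * P[1] / P[2] + cy;
}

// Same formula as pixel2cam, returned as a homogeneous normalized point (z = 1).
Vec3 pixel_to_normalized(double u, double v, double fx, double fy, double cx, double cy) {
  return {(u - cx) / fx, (v - cy) / fy, 1.0};
}

// Epipolar residual x2^T * (t^ R) * x1; zero for a noise-free correspondence.
double epipolar_residual(const Vec3 &x1, const Vec3 &x2, const Mat3 &R, const Vec3 &t) {
  const Mat3 E = mul(skew(t), R);
  return dot(x2, mul(E, x1));
}
```

A correct correspondence gives a residual at floating-point noise level while an unrelated point does not; with real matches the residual is small but nonzero because of pixel noise.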

7.5 Triangulation

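Compiling and running the code gives the results below. (I have not fully understood some of the class calls yet; leaving this as an open item to revisit.)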


```cpp
#include <cstdio>
#include <iostream>
#include <vector>
#include <opencv2/opencv.hpp>
// #include "extra.h" // used in OpenCV 2

using namespace std;
using namespace cv;

void find_feature_matches(
    const Mat &img_1, const Mat &img_2,
    std::vector<KeyPoint> &keypoints_1,
    std::vector<KeyPoint> &keypoints_2,
    std::vector<DMatch> &matches);

void pose_estimation_2d2d(
    const std::vector<KeyPoint> &keypoints_1,
    const std::vector<KeyPoint> &keypoints_2,
    const std::vector<DMatch> &matches,
    Mat &R, Mat &t);

void triangulation(
    const vector<KeyPoint> &keypoint_1,
    const vector<KeyPoint> &keypoint_2,
    const std::vector<DMatch> &matches,
    const Mat &R, const Mat &t,
    vector<Point3d> &points);

/// For plotting: map a depth in [low_th, up_th] to a BGR color (near = red, far = blue)
inline cv::Scalar get_color(float depth) {
  float up_th = 50, low_th = 10, th_range = up_th - low_th;
  if (depth > up_th) depth = up_th;
  if (depth < low_th) depth = low_th;
  // Normalize relative to low_th so both channels stay within [0, 255]
  float ratio = (depth - low_th) / th_range;
  return cv::Scalar(255 * ratio, 0, 255 * (1 - ratio));
}

// Pixel coordinates -> normalized camera coordinates
Point2f pixel2cam(const Point2d &p, const Mat &K);

int main(int argc, char **argv) {
  if (argc != 3) {
    cout << "usage: triangulation img1 img2" << endl;
    return 1;
  }
  //-- Read the images (IMREAD_COLOR replaces CV_LOAD_IMAGE_COLOR, which OpenCV 4 removed)
  Mat img_1 = imread(argv[1], IMREAD_COLOR);
  Mat img_2 = imread(argv[2], IMREAD_COLOR);
  if (img_1.empty() || img_2.empty()) {
    cerr << "Can not load images!" << endl;
    return 1;
  }

  vector<KeyPoint> keypoints_1, keypoints_2;
  vector<DMatch> matches;
  find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches);
  cout << "Found " << matches.size() << " matched point pairs" << endl;

  //-- Estimate the motion between the two images
  Mat R, t;
  pose_estimation_2d2d(keypoints_1, keypoints_2, matches, R, t);

  //-- Triangulate
  vector<Point3d> points;
  triangulation(keypoints_1, keypoints_2, matches, R, t, points);

  //-- Check triangulated points against the features: color each feature by its depth
  Mat img1_plot = img_1.clone();
  Mat img2_plot = img_2.clone();
  for (size_t i = 0; i < matches.size(); i++) {
    // Image 1: the depth is the z coordinate in the camera-1 frame
    float depth1 = points[i].z;
    cout << "depth: " << depth1 << endl;
    cv::circle(img1_plot, keypoints_1[matches[i].queryIdx].pt, 2, get_color(depth1), 2);

    // Image 2: transform the point into the camera-2 frame, P2 = R * P1 + t
    Mat pt2_trans = R * (Mat_<double>(3, 1) << points[i].x, points[i].y, points[i].z) + t;
    float depth2 = pt2_trans.at<double>(2, 0);
    cv::circle(img2_plot, keypoints_2[matches[i].trainIdx].pt, 2, get_color(depth2), 2);
  }
  cv::imshow("img 1", img1_plot);
  cv::imshow("img 2", img2_plot);
  cv::waitKey();
  return 0;
}

void find_feature_matches(const Mat &img_1, const Mat &img_2,
                          std::vector<KeyPoint> &keypoints_1,
                          std::vector<KeyPoint> &keypoints_2,
                          std::vector<DMatch> &matches) {
  //-- Initialization
  Mat descriptors_1, descriptors_2;
  // used in OpenCV 3/4
  Ptr<FeatureDetector> detector = ORB::create();
  Ptr<DescriptorExtractor> descriptor = ORB::create();
  // use this if you are in OpenCV 2
  // Ptr<FeatureDetector> detector = FeatureDetector::create("ORB");
  // Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create("ORB");
  Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

  //-- Step 1: detect Oriented FAST corner locations
  detector->detect(img_1, keypoints_1);
  detector->detect(img_2, keypoints_2);

  //-- Step 2: compute BRIEF descriptors at the corner locations
  descriptor->compute(img_1, keypoints_1, descriptors_1);
  descriptor->compute(img_2, keypoints_2, descriptors_2);

  //-- Step 3: match the BRIEF descriptors of the two images using Hamming distance
  vector<DMatch> match;
  // BFMatcher matcher(NORM_HAMMING);
  matcher->match(descriptors_1, descriptors_2, match);

  //-- Step 4: filter the matched pairs
  double min_dist = 10000, max_dist = 0;
  // Find the minimum and maximum distance over all matches,
  // i.e. the distances of the most similar and the least similar pairs
  for (const DMatch &m : match) {
    double dist = m.distance;
    if (dist < min_dist) min_dist = dist;
    if (dist > max_dist) max_dist = dist;
  }
  printf("-- Max dist : %f \n", max_dist);
  printf("-- Min dist : %f \n", min_dist);

  // A match is treated as wrong when its distance exceeds twice the minimum distance.
  // The minimum can be very small, so an empirical value of 30 is used as a lower bound.
  for (const DMatch &m : match) {
    if (m.distance <= max(2 * min_dist, 30.0)) {
      matches.push_back(m);
    }
  }
}

void pose_estimation_2d2d(
    const std::vector<KeyPoint> &keypoints_1,
    const std::vector<KeyPoint> &keypoints_2,
    const std::vector<DMatch> &matches,
    Mat &R, Mat &t) {
  // TUM Freiburg2 intrinsics: fx = 520.9, fy = 521.0, cx = 325.1, cy = 249.7
  // (findEssentialMat below takes a single focal length)

  //-- Convert the matched points to vector<Point2f>
  vector<Point2f> points1;
  vector<Point2f> points2;
  for (const DMatch &m : matches) {
    points1.push_back(keypoints_1[m.queryIdx].pt);
    points2.push_back(keypoints_2[m.trainIdx].pt);
  }

  //-- Compute the essential matrix
  Point2d principal_point(325.1, 249.7);  // principal point, TUM dataset calibration
  double focal_length = 521;              // focal length, TUM dataset calibration
  Mat essential_matrix = findEssentialMat(points1, points2, focal_length, principal_point);

  //-- Recover rotation and translation from the essential matrix
  recoverPose(essential_matrix, points1, points2, R, t, focal_length, principal_point);
}

// Recover the 3D positions of the feature points by triangulation,
// using the camera pose obtained from epipolar geometry
void triangulation(
    const vector<KeyPoint> &keypoint_1,
    const vector<KeyPoint> &keypoint_2,
    const std::vector<DMatch> &matches,
    const Mat &R, const Mat &t,
    vector<Point3d> &points) {
  // Projection matrices in normalized coordinates: camera 1 = [I | 0], camera 2 = [R | t]
  Mat T1 = (Mat_<float>(3, 4) <<
      1, 0, 0, 0,
      0, 1, 0, 0,
      0, 0, 1, 0);
  Mat T2 = (Mat_<float>(3, 4) <<
      R.at<double>(0, 0), R.at<double>(0, 1), R.at<double>(0, 2), t.at<double>(0, 0),
      R.at<double>(1, 0), R.at<double>(1, 1), R.at<double>(1, 2), t.at<double>(1, 0),
      R.at<double>(2, 0), R.at<double>(2, 1), R.at<double>(2, 2), t.at<double>(2, 0));

  Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1);
  vector<Point2f> pts_1, pts_2;
  for (const DMatch &m : matches) {
    // Convert pixel coordinates to normalized camera coordinates
    pts_1.push_back(pixel2cam(keypoint_1[m.queryIdx].pt, K));
    pts_2.push_back(pixel2cam(keypoint_2[m.trainIdx].pt, K));
  }

  Mat pts_4d;  // 4xN homogeneous points, read back as float below to match the float inputs
  cv::triangulatePoints(T1, T2, pts_1, pts_2, pts_4d);

  // Convert to non-homogeneous coordinates
  for (int i = 0; i < pts_4d.cols; i++) {
    Mat x = pts_4d.col(i);
    x /= x.at<float>(3, 0);  // divide by the homogeneous coordinate w
    points.push_back(Point3d(x.at<float>(0, 0), x.at<float>(1, 0), x.at<float>(2, 0)));
  }
}

// Pixel -> normalized camera coordinates: x = (u - cx) / fx, y = (v - cy) / fy
Point2f pixel2cam(const Point2d &p, const Mat &K) {
  return Point2f(
      (p.x - K.at<double>(0, 2)) / K.at<double>(0, 0),
      (p.y - K.at<double>(1, 2)) / K.at<double>(1, 1));
}
```
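`cv::triangulatePoints` returns homogeneous 4-vectors, which hides what is actually being solved. A minimal sketch of the underlying two-view relation, assuming P2 = R·P1 + t and normalized coordinates x1, x2 with z = 1: from s2·x2 = s1·R·x1 + t, taking the cross product with x2 gives s1·(x2 × R·x1) + x2 × t = 0, which is solved for the depth s1 by least squares. This is one standard formulation, not necessarily how OpenCV computes it internally; `triangulate_depth` and its 1e-12 degeneracy threshold are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

Vec3 mul(const Mat3 &A, const Vec3 &v) {
  Vec3 r{};
  for (int i = 0; i < 3; ++i) r[i] = A[i][0] * v[0] + A[i][1] * v[1] + A[i][2] * v[2];
  return r;
}

Vec3 cross(const Vec3 &a, const Vec3 &b) {
  return {a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]};
}

double dot(const Vec3 &a, const Vec3 &b) { return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]; }

// Depths s1 (camera 1) and s2 (camera 2) of a point seen at normalized x1, x2,
// assuming P2 = R * P1 + t. Returns false when the rays are (nearly) parallel.
bool triangulate_depth(const Vec3 &x1, const Vec3 &x2, const Mat3 &R, const Vec3 &t,
                       double &s1, double &s2) {
  const Vec3 Rx1 = mul(R, x1);
  const Vec3 a = cross(x2, Rx1);  // coefficient of s1
  const Vec3 b = cross(x2, t);    // constant term
  const double aa = dot(a, a);
  if (aa < 1e-12) return false;   // hypothetical threshold: depth is unobservable
  s1 = -dot(a, b) / aa;           // least-squares solution of s1 * a + b = 0
  // x2 has z = 1, so s2 is the z component of s1 * R * x1 + t.
  s2 = s1 * Rx1[2] + t[2];
  return true;
}
```

With zero translation the two rays coincide and the depth cannot be recovered, which is why monocular triangulation needs enough baseline between the two frames.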

#include <iostream> #include <opencv2/opencv.hpp> // #include "extra.h" // used in opencv2 using namespace std; using namespace cv; void find_feature_matches( const Mat &img_1, const Mat &img_2, std::vector<KeyPoint> &keypoints_1, std::vector<KeyPoint> &keypoints_2, std::vector<DMatch> &matches); void pose_estimation_2d2d( const std::vector<KeyPoint> &keypoints_1, const std::vector<KeyPoint> &keypoints_2, const std::vector<DMatch> &matches, Mat &R, Mat &t); void triangulation( const vector<KeyPoint> &keypoint_1, const vector<KeyPoint> &keypoint_2, const std::vector<DMatch> &matches, const Mat &R, const Mat &t, vector<Point3d> &points ); /// 作图用 inline cv::Scalar get_color(float depth) { float up_th = 50, low_th = 10, th_range = up_th - low_th; if (depth > up_th) depth = up_th; if (depth < low_th) depth = low_th; return cv::Scalar(255 * depth / th_range, 0, 255 * (1 - depth / th_range)); } // 像素坐标转相机归一化坐标 Point2f pixel2cam(const Point2d &p, const Mat &K); int main(int argc, char **argv) { if (argc != 3) { cout << "usage: triangulation img1 img2" << endl; return 1; } //-- 读取图像 Mat img_1 = imread(argv[1], CV_LOAD_IMAGE_COLOR); Mat img_2 = imread(argv[2], CV_LOAD_IMAGE_COLOR); vector<KeyPoint> keypoints_1, keypoints_2; vector<DMatch> matches; find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches); cout << "一共找到了" << matches.size() << "组匹配点" << endl; //-- 估计两张图像间运动 Mat R, t; pose_estimation_2d2d(keypoints_1, keypoints_2, matches, R, t); //-- 三角化 vector<Point3d> points; triangulation(keypoints_1, keypoints_2, matches, R, t, points); //-- 验证三角化点与特征点的重投影关系 Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); Mat img1_plot = img_1.clone(); Mat img2_plot = img_2.clone(); for (int i = 0; i < matches.size(); i++) { // 第一个图 float depth1 = points[i].z; cout << "depth: " << depth1 << endl; Point2d pt1_cam = pixel2cam(keypoints_1[matches[i].queryIdx].pt, K); cv::circle(img1_plot, keypoints_1[matches[i].queryIdx].pt, 2, get_color(depth1), 2); // 
第二个图 Mat pt2_trans = R * (Mat_<double>(3, 1) << points[i].x, points[i].y, points[i].z) + t; float depth2 = pt2_trans.at<double>(2, 0); cv::circle(img2_plot, keypoints_2[matches[i].trainIdx].pt, 2, get_color(depth2), 2); } cv::imshow("img 1", img1_plot); cv::imshow("img 2", img2_plot); cv::waitKey(); return 0; } void find_feature_matches(const Mat &img_1, const Mat &img_2, std::vector<KeyPoint> &keypoints_1, std::vector<KeyPoint> &keypoints_2, std::vector<DMatch> &matches) { //-- 初始化 Mat descriptors_1, descriptors_2; // used in OpenCV3 Ptr<FeatureDetector> detector = ORB::create(); Ptr<DescriptorExtractor> descriptor = ORB::create(); // use this if you are in OpenCV2 // Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" ); // Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" ); Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming"); //-- 第一步:检测 Oriented FAST 角点位置 detector->detect(img_1, keypoints_1); detector->detect(img_2, keypoints_2); //-- 第二步:根据角点位置计算 BRIEF 描述子 descriptor->compute(img_1, keypoints_1, descriptors_1); descriptor->compute(img_2, keypoints_2, descriptors_2); //-- 第三步:对两幅图像中的BRIEF描述子进行匹配,使用 Hamming 距离 vector<DMatch> match; // BFMatcher matcher ( NORM_HAMMING ); matcher->match(descriptors_1, descriptors_2, match); //-- 第四步:匹配点对筛选 double min_dist = 10000, max_dist = 0; //找出所有匹配之间的最小距离和最大距离, 即是最相似的和最不相似的两组点之间的距离 for (int i = 0; i < descriptors_1.rows; i++) { double dist = match[i].distance; if (dist < min_dist) min_dist = dist; if (dist > max_dist) max_dist = dist; } printf("-- Max dist : %f \n", max_dist); printf("-- Min dist : %f \n", min_dist); //当描述子之间的距离大于两倍的最小距离时,即认为匹配有误.但有时候最小距离会非常小,设置一个经验值30作为下限. 
for (int i = 0; i < descriptors_1.rows; i++) { if (match[i].distance <= max(2 * min_dist, 30.0)) { matches.push_back(match[i]); } } } void pose_estimation_2d2d( const std::vector<KeyPoint> &keypoints_1, const std::vector<KeyPoint> &keypoints_2, const std::vector<DMatch> &matches, Mat &R, Mat &t) { // 相机内参,TUM Freiburg2 Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); //-- 把匹配点转换为vector<Point2f>的形式 vector<Point2f> points1; vector<Point2f> points2; for (int i = 0; i < (int) matches.size(); i++) { points1.push_back(keypoints_1[matches[i].queryIdx].pt); points2.push_back(keypoints_2[matches[i].trainIdx].pt); } //-- 计算本质矩阵 Point2d principal_point(325.1, 249.7); //相机主点, TUM dataset标定值 int focal_length = 521; //相机焦距, TUM dataset标定值 Mat essential_matrix; essential_matrix = findEssentialMat(points1, points2, focal_length, principal_point); //-- 从本质矩阵中恢复旋转和平移信息. recoverPose(essential_matrix, points1, points2, R, t, focal_length, principal_point); } void triangulation( //通过三角化利用对极几何求解的相机位姿求出特征点的空间位置 const vector<KeyPoint> &keypoint_1, const vector<KeyPoint> &keypoint_2, const std::vector<DMatch> &matches, const Mat &R, const Mat &t, vector<Point3d> &points) { Mat T1 = (Mat_<float>(3, 4) << 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0); Mat T2 = (Mat_<float>(3, 4) << R.at<double>(0, 0), R.at<double>(0, 1), R.at<double>(0, 2), t.at<double>(0, 0), R.at<double>(1, 0), R.at<double>(1, 1), R.at<double>(1, 2), t.at<double>(1, 0), R.at<double>(2, 0), R.at<double>(2, 1), R.at<double>(2, 2), t.at<double>(2, 0) ); Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); vector<Point2f> pts_1, pts_2; for (DMatch m:matches) { // 将像素坐标转换至相机坐标 pts_1.push_back(pixel2cam(keypoint_1[m.queryIdx].pt, K)); pts_2.push_back(pixel2cam(keypoint_2[m.trainIdx].pt, K)); } Mat pts_4d; cv::triangulatePoints(T1, T2, pts_1, pts_2, pts_4d); // 转换成非齐次坐标 for (int i = 0; i < pts_4d.cols; i++) { Mat x = pts_4d.col(i); x /= x.at<float>(3, 0); // 归一化 Point3d p( x.at<float>(0, 0), 
x.at<float>(1, 0), x.at<float>(2, 0) ); points.push_back(p); } } Point2f pixel2cam(const Point2d &p, const Mat &K) { return Point2f ( (p.x - K.at<double>(0, 2)) / K.at<double>(0, 0), (p.y - K.at<double>(1, 2)) / K.at<double>(1, 1) ); }

#include <iostream> #include <opencv2/opencv.hpp> // #include "extra.h" // used in opencv2 using namespace std; using namespace cv; void find_feature_matches( const Mat &img_1, const Mat &img_2, std::vector<KeyPoint> &keypoints_1, std::vector<KeyPoint> &keypoints_2, std::vector<DMatch> &matches); void pose_estimation_2d2d( const std::vector<KeyPoint> &keypoints_1, const std::vector<KeyPoint> &keypoints_2, const std::vector<DMatch> &matches, Mat &R, Mat &t); void triangulation( const vector<KeyPoint> &keypoint_1, const vector<KeyPoint> &keypoint_2, const std::vector<DMatch> &matches, const Mat &R, const Mat &t, vector<Point3d> &points ); /// 作图用 inline cv::Scalar get_color(float depth) { float up_th = 50, low_th = 10, th_range = up_th - low_th; if (depth > up_th) depth = up_th; if (depth < low_th) depth = low_th; return cv::Scalar(255 * depth / th_range, 0, 255 * (1 - depth / th_range)); } // 像素坐标转相机归一化坐标 Point2f pixel2cam(const Point2d &p, const Mat &K); int main(int argc, char **argv) { if (argc != 3) { cout << "usage: triangulation img1 img2" << endl; return 1; } //-- 读取图像 Mat img_1 = imread(argv[1], CV_LOAD_IMAGE_COLOR); Mat img_2 = imread(argv[2], CV_LOAD_IMAGE_COLOR); vector<KeyPoint> keypoints_1, keypoints_2; vector<DMatch> matches; find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches); cout << "一共找到了" << matches.size() << "组匹配点" << endl; //-- 估计两张图像间运动 Mat R, t; pose_estimation_2d2d(keypoints_1, keypoints_2, matches, R, t); //-- 三角化 vector<Point3d> points; triangulation(keypoints_1, keypoints_2, matches, R, t, points); //-- 验证三角化点与特征点的重投影关系 Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); Mat img1_plot = img_1.clone(); Mat img2_plot = img_2.clone(); for (int i = 0; i < matches.size(); i++) { // 第一个图 float depth1 = points[i].z; cout << "depth: " << depth1 << endl; Point2d pt1_cam = pixel2cam(keypoints_1[matches[i].queryIdx].pt, K); cv::circle(img1_plot, keypoints_1[matches[i].queryIdx].pt, 2, get_color(depth1), 2); // 
第二个图 Mat pt2_trans = R * (Mat_<double>(3, 1) << points[i].x, points[i].y, points[i].z) + t; float depth2 = pt2_trans.at<double>(2, 0); cv::circle(img2_plot, keypoints_2[matches[i].trainIdx].pt, 2, get_color(depth2), 2); } cv::imshow("img 1", img1_plot); cv::imshow("img 2", img2_plot); cv::waitKey(); return 0; } void find_feature_matches(const Mat &img_1, const Mat &img_2, std::vector<KeyPoint> &keypoints_1, std::vector<KeyPoint> &keypoints_2, std::vector<DMatch> &matches) { //-- 初始化 Mat descriptors_1, descriptors_2; // used in OpenCV3 Ptr<FeatureDetector> detector = ORB::create(); Ptr<DescriptorExtractor> descriptor = ORB::create(); // use this if you are in OpenCV2 // Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" ); // Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" ); Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming"); //-- 第一步:检测 Oriented FAST 角点位置 detector->detect(img_1, keypoints_1); detector->detect(img_2, keypoints_2); //-- 第二步:根据角点位置计算 BRIEF 描述子 descriptor->compute(img_1, keypoints_1, descriptors_1); descriptor->compute(img_2, keypoints_2, descriptors_2); //-- 第三步:对两幅图像中的BRIEF描述子进行匹配,使用 Hamming 距离 vector<DMatch> match; // BFMatcher matcher ( NORM_HAMMING ); matcher->match(descriptors_1, descriptors_2, match); //-- 第四步:匹配点对筛选 double min_dist = 10000, max_dist = 0; //找出所有匹配之间的最小距离和最大距离, 即是最相似的和最不相似的两组点之间的距离 for (int i = 0; i < descriptors_1.rows; i++) { double dist = match[i].distance; if (dist < min_dist) min_dist = dist; if (dist > max_dist) max_dist = dist; } printf("-- Max dist : %f \n", max_dist); printf("-- Min dist : %f \n", min_dist); //当描述子之间的距离大于两倍的最小距离时,即认为匹配有误.但有时候最小距离会非常小,设置一个经验值30作为下限. 
for (int i = 0; i < descriptors_1.rows; i++) { if (match[i].distance <= max(2 * min_dist, 30.0)) { matches.push_back(match[i]); } } } void pose_estimation_2d2d( const std::vector<KeyPoint> &keypoints_1, const std::vector<KeyPoint> &keypoints_2, const std::vector<DMatch> &matches, Mat &R, Mat &t) { // 相机内参,TUM Freiburg2 Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); //-- 把匹配点转换为vector<Point2f>的形式 vector<Point2f> points1; vector<Point2f> points2; for (int i = 0; i < (int) matches.size(); i++) { points1.push_back(keypoints_1[matches[i].queryIdx].pt); points2.push_back(keypoints_2[matches[i].trainIdx].pt); } //-- 计算本质矩阵 Point2d principal_point(325.1, 249.7); //相机主点, TUM dataset标定值 int focal_length = 521; //相机焦距, TUM dataset标定值 Mat essential_matrix; essential_matrix = findEssentialMat(points1, points2, focal_length, principal_point); //-- 从本质矩阵中恢复旋转和平移信息. recoverPose(essential_matrix, points1, points2, R, t, focal_length, principal_point); } void triangulation( //通过三角化利用对极几何求解的相机位姿求出特征点的空间位置 const vector<KeyPoint> &keypoint_1, const vector<KeyPoint> &keypoint_2, const std::vector<DMatch> &matches, const Mat &R, const Mat &t, vector<Point3d> &points) { Mat T1 = (Mat_<float>(3, 4) << 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0); Mat T2 = (Mat_<float>(3, 4) << R.at<double>(0, 0), R.at<double>(0, 1), R.at<double>(0, 2), t.at<double>(0, 0), R.at<double>(1, 0), R.at<double>(1, 1), R.at<double>(1, 2), t.at<double>(1, 0), R.at<double>(2, 0), R.at<double>(2, 1), R.at<double>(2, 2), t.at<double>(2, 0) ); Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); vector<Point2f> pts_1, pts_2; for (DMatch m:matches) { // 将像素坐标转换至相机坐标 pts_1.push_back(pixel2cam(keypoint_1[m.queryIdx].pt, K)); pts_2.push_back(pixel2cam(keypoint_2[m.trainIdx].pt, K)); } Mat pts_4d; cv::triangulatePoints(T1, T2, pts_1, pts_2, pts_4d); // 转换成非齐次坐标 for (int i = 0; i < pts_4d.cols; i++) { Mat x = pts_4d.col(i); x /= x.at<float>(3, 0); // 归一化 Point3d p( x.at<float>(0, 0), 
x.at<float>(1, 0), x.at<float>(2, 0) ); points.push_back(p); } } Point2f pixel2cam(const Point2d &p, const Mat &K) { return Point2f ( (p.x - K.at<double>(0, 2)) / K.at<double>(0, 0), (p.y - K.at<double>(1, 2)) / K.at<double>(1, 1) ); }

复制

#include <iostream> #include <opencv2/opencv.hpp> // #include "extra.h" // used in opencv2 using namespace std; using namespace cv; void find_feature_matches( const Mat &img_1, const Mat &img_2, std::vector<KeyPoint> &keypoints_1, std::vector<KeyPoint> &keypoints_2, std::vector<DMatch> &matches); void pose_estimation_2d2d( const std::vector<KeyPoint> &keypoints_1, const std::vector<KeyPoint> &keypoints_2, const std::vector<DMatch> &matches, Mat &R, Mat &t); void triangulation( const vector<KeyPoint> &keypoint_1, const vector<KeyPoint> &keypoint_2, const std::vector<DMatch> &matches, const Mat &R, const Mat &t, vector<Point3d> &points ); /// 作图用 inline cv::Scalar get_color(float depth) { float up_th = 50, low_th = 10, th_range = up_th - low_th; if (depth > up_th) depth = up_th; if (depth < low_th) depth = low_th; return cv::Scalar(255 * depth / th_range, 0, 255 * (1 - depth / th_range)); } // 像素坐标转相机归一化坐标 Point2f pixel2cam(const Point2d &p, const Mat &K); int main(int argc, char **argv) { if (argc != 3) { cout << "usage: triangulation img1 img2" << endl; return 1; } //-- 读取图像 Mat img_1 = imread(argv[1], CV_LOAD_IMAGE_COLOR); Mat img_2 = imread(argv[2], CV_LOAD_IMAGE_COLOR); vector<KeyPoint> keypoints_1, keypoints_2; vector<DMatch> matches; find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches); cout << "一共找到了" << matches.size() << "组匹配点" << endl; //-- 估计两张图像间运动 Mat R, t; pose_estimation_2d2d(keypoints_1, keypoints_2, matches, R, t); //-- 三角化 vector<Point3d> points; triangulation(keypoints_1, keypoints_2, matches, R, t, points); //-- 验证三角化点与特征点的重投影关系 Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); Mat img1_plot = img_1.clone(); Mat img2_plot = img_2.clone(); for (int i = 0; i < matches.size(); i++) { // 第一个图 float depth1 = points[i].z; cout << "depth: " << depth1 << endl; Point2d pt1_cam = pixel2cam(keypoints_1[matches[i].queryIdx].pt, K); cv::circle(img1_plot, keypoints_1[matches[i].queryIdx].pt, 2, get_color(depth1), 2); // 
第二个图 Mat pt2_trans = R * (Mat_<double>(3, 1) << points[i].x, points[i].y, points[i].z) + t; float depth2 = pt2_trans.at<double>(2, 0); cv::circle(img2_plot, keypoints_2[matches[i].trainIdx].pt, 2, get_color(depth2), 2); } cv::imshow("img 1", img1_plot); cv::imshow("img 2", img2_plot); cv::waitKey(); return 0; } void find_feature_matches(const Mat &img_1, const Mat &img_2, std::vector<KeyPoint> &keypoints_1, std::vector<KeyPoint> &keypoints_2, std::vector<DMatch> &matches) { //-- 初始化 Mat descriptors_1, descriptors_2; // used in OpenCV3 Ptr<FeatureDetector> detector = ORB::create(); Ptr<DescriptorExtractor> descriptor = ORB::create(); // use this if you are in OpenCV2 // Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" ); // Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" ); Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming"); //-- 第一步:检测 Oriented FAST 角点位置 detector->detect(img_1, keypoints_1); detector->detect(img_2, keypoints_2); //-- 第二步:根据角点位置计算 BRIEF 描述子 descriptor->compute(img_1, keypoints_1, descriptors_1); descriptor->compute(img_2, keypoints_2, descriptors_2); //-- 第三步:对两幅图像中的BRIEF描述子进行匹配,使用 Hamming 距离 vector<DMatch> match; // BFMatcher matcher ( NORM_HAMMING ); matcher->match(descriptors_1, descriptors_2, match); //-- 第四步:匹配点对筛选 double min_dist = 10000, max_dist = 0; //找出所有匹配之间的最小距离和最大距离, 即是最相似的和最不相似的两组点之间的距离 for (int i = 0; i < descriptors_1.rows; i++) { double dist = match[i].distance; if (dist < min_dist) min_dist = dist; if (dist > max_dist) max_dist = dist; } printf("-- Max dist : %f \n", max_dist); printf("-- Min dist : %f \n", min_dist); //当描述子之间的距离大于两倍的最小距离时,即认为匹配有误.但有时候最小距离会非常小,设置一个经验值30作为下限. 
for (int i = 0; i < descriptors_1.rows; i++) { if (match[i].distance <= max(2 * min_dist, 30.0)) { matches.push_back(match[i]); } } } void pose_estimation_2d2d( const std::vector<KeyPoint> &keypoints_1, const std::vector<KeyPoint> &keypoints_2, const std::vector<DMatch> &matches, Mat &R, Mat &t) { // 相机内参,TUM Freiburg2 Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); //-- 把匹配点转换为vector<Point2f>的形式 vector<Point2f> points1; vector<Point2f> points2; for (int i = 0; i < (int) matches.size(); i++) { points1.push_back(keypoints_1[matches[i].queryIdx].pt); points2.push_back(keypoints_2[matches[i].trainIdx].pt); } //-- 计算本质矩阵 Point2d principal_point(325.1, 249.7); //相机主点, TUM dataset标定值 int focal_length = 521; //相机焦距, TUM dataset标定值 Mat essential_matrix; essential_matrix = findEssentialMat(points1, points2, focal_length, principal_point); //-- 从本质矩阵中恢复旋转和平移信息. recoverPose(essential_matrix, points1, points2, R, t, focal_length, principal_point); } void triangulation( //通过三角化利用对极几何求解的相机位姿求出特征点的空间位置 const vector<KeyPoint> &keypoint_1, const vector<KeyPoint> &keypoint_2, const std::vector<DMatch> &matches, const Mat &R, const Mat &t, vector<Point3d> &points) { Mat T1 = (Mat_<float>(3, 4) << 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0); Mat T2 = (Mat_<float>(3, 4) << R.at<double>(0, 0), R.at<double>(0, 1), R.at<double>(0, 2), t.at<double>(0, 0), R.at<double>(1, 0), R.at<double>(1, 1), R.at<double>(1, 2), t.at<double>(1, 0), R.at<double>(2, 0), R.at<double>(2, 1), R.at<double>(2, 2), t.at<double>(2, 0) ); Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1); vector<Point2f> pts_1, pts_2; for (DMatch m:matches) { // 将像素坐标转换至相机坐标 pts_1.push_back(pixel2cam(keypoint_1[m.queryIdx].pt, K)); pts_2.push_back(pixel2cam(keypoint_2[m.trainIdx].pt, K)); } Mat pts_4d; cv::triangulatePoints(T1, T2, pts_1, pts_2, pts_4d); // 转换成非齐次坐标 for (int i = 0; i < pts_4d.cols; i++) { Mat x = pts_4d.col(i); x /= x.at<float>(3, 0); // 归一化 Point3d p( x.at<float>(0, 0), 
x.at<float>(1, 0), x.at<float>(2, 0) ); points.push_back(p); } } Point2f pixel2cam(const Point2d &p, const Mat &K) { return Point2f ( (p.x - K.at<double>(0, 2)) / K.at<double>(0, 0), (p.y - K.at<double>(1, 2)) / K.at<double>(1, 1) ); }


7.7 3D-2D: PnP

Compiling and running the code produces the following output:

```text
[ INFO:0] Initialize OpenCL runtime...
-- Max dist : 95.000000
-- Min dist : 7.000000
一共找到了81组匹配点
3d-2d pairs: 77
solve pnp in opencv cost time: 0.0142977 seconds.
R=
[0.9979193252225095, -0.05138618904650329, 0.03894200717385431;
0.05033852907733768, 0.9983556574295407, 0.0274228694479559;
-0.04028712992732943, -0.02540552801471818, 0.998865109165653]
t=
[-0.1255867099750184;
-0.007363525258815287;
0.06099926588678122]
calling bundle adjustment by gauss newton
iteration 0 cost=44765.3537799
iteration 1 cost=431.695366816
iteration 2 cost=319.560037493
iteration 3 cost=319.55886789
pose by g-n:
0.997919325221 -0.0513861890122 0.0389420072614 -0.125586710123
0.0503385290405 0.998355657431 0.0274228694545 -0.00736352527141
-0.0402871300142 -0.0254055280183 0.998865109162 0.0609992659219
0 0 0 1
solve pnp by gauss newton cost time: 9.4266e-05 seconds.
calling bundle adjustment by g2o
iteration= 0 chi2= 431.695367 time= 2.3349e-05 cumTime= 2.3349e-05 edges= 77 schur= 0
iteration= 1 chi2= 319.560037 time= 1.0797e-05 cumTime= 3.4146e-05 edges= 77 schur= 0
iteration= 2 chi2= 319.558868 time= 1.0022e-05 cumTime= 4.4168e-05 edges= 77 schur= 0
iteration= 3 chi2= 319.558868 time= 9.89402e-06 cumTime= 5.4062e-05 edges= 77 schur= 0
iteration= 4 chi2= 319.558868 time= 9.83902e-06 cumTime= 6.3901e-05 edges= 77 schur= 0
iteration= 5 chi2= 319.558868 time= 9.86701e-06 cumTime= 7.3768e-05 edges= 77 schur= 0
iteration= 6 chi2= 319.558868 time= 9.80101e-06 cumTime= 8.3569e-05 edges= 77 schur= 0
iteration= 7 chi2= 319.558868 time= 9.78299e-06 cumTime= 9.3352e-05 edges= 77 schur= 0
iteration= 8 chi2= 319.558868 time= 9.785e-06 cumTime= 0.000103137 edges= 77 schur= 0
iteration= 9 chi2= 319.558868 time= 9.765e-06 cumTime= 0.000112902 edges= 77 schur= 0
optimization costs time: 0.000419886 seconds.
pose estimated by g2o =
0.997919325223 -0.0513861890471 0.0389420071732 -0.125586709974
0.0503385290779 0.998355657429 0.0274228694493 -0.007363525261
-0.0402871299268 -0.0254055280161 0.998865109166 0.0609992658862
0 0 0 1
solve pnp by g2o cost time: 0.000538141 seconds.
*** 正常退出 ***
```
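The printed results can be checked by hand. A valid rotation matrix satisfies RᵀR = I, and tr(R) = 1 + 2cos θ gives its rotation angle θ. The sketch below applies both checks to the R printed by OpenCV. It also compares that R with the rotation block of the g2o pose from the same run. The helper names (`rotationAngle`, `orthogonalityError`, `maxAbsDiff`) and the `Mat3` alias are hypothetical, and it uses only the C++ standard library. The matrix values are copied from the output above.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat3 = std::array<std::array<double, 3>, 3>;

// Rotation angle from the trace, using tr(R) = 1 + 2 cos(theta).
double rotationAngle(const Mat3 &R) {
    double c = (R[0][0] + R[1][1] + R[2][2] - 1.0) / 2.0;
    c = std::fmax(-1.0, std::fmin(1.0, c));  // clamp against rounding before acos
    return std::acos(c);
}

// Largest entry of |R^T R - I|; 0 for an exact rotation matrix.
double orthogonalityError(const Mat3 &R) {
    double err = 0.0;
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j) {
            double dot = 0.0;
            for (int k = 0; k < 3; ++k) dot += R[k][i] * R[k][j];
            err = std::fmax(err, std::fabs(dot - (i == j ? 1.0 : 0.0)));
        }
    return err;
}

// Largest element-wise difference between two 3x3 matrices.
double maxAbsDiff(const Mat3 &A, const Mat3 &B) {
    double err = 0.0;
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j) err = std::fmax(err, std::fabs(A[i][j] - B[i][j]));
    return err;
}

// R printed by solvePnP in the run above.
const Mat3 R_opencv = {{{0.9979193252225095, -0.05138618904650329, 0.03894200717385431},
                        {0.05033852907733768, 0.9983556574295407, 0.0274228694479559},
                        {-0.04028712992732943, -0.02540552801471818, 0.998865109165653}}};

// Rotation block of the pose printed by g2o in the same run.
const Mat3 R_g2o = {{{0.997919325223, -0.0513861890471, 0.0389420071732},
                     {0.0503385290779, 0.998355657429, 0.0274228694493},
                     {-0.0402871299268, -0.0254055280161, 0.998865109166}}};
```

On these numbers, `rotationAngle(R_opencv)` comes out at about 0.07 rad, roughly 4 degrees. Both matrices are orthogonal up to the rounding of the printed digits. The OpenCV and g2o rotations agree well below 1e-9, which is consistent with both methods converging to the same pose.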


The code, with comments:

1. Solving PnP directly with OpenCV

```cpp
chrono::steady_clock::time_point t1 = chrono::steady_clock::now();
Mat r, t;
// Call OpenCV's PnP solver; Mat() means no distortion coefficients.
// Other methods (e.g. SOLVEPNP_EPNP, SOLVEPNP_DLS) can be selected via the flags argument.
solvePnP(pts_3d, pts_2d, K, Mat(), r, t, false);
Mat R;
cv::Rodrigues(r, R); // r is a rotation vector; convert it to a rotation matrix with the Rodrigues formula
chrono::steady_clock::time_point t2 = chrono::steady_clock::now();
chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);
cout << "solve pnp in opencv cost time: " << time_used.count() << " seconds." << endl;
cout << "R=" << endl << R << endl;
cout << "t=" << endl << t << endl;
```
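`solvePnP` returns the rotation as a rotation vector r, and `cv::Rodrigues` turns it into a matrix. The conversion uses R = cos θ·I + (1 − cos θ)·n nᵀ + sin θ·n^, where θ = |r|, n = r/θ, and n^ is the skew-symmetric matrix of n. The sketch below implements that vector-to-matrix direction with only the standard library. It should agree with `cv::Rodrigues` up to rounding. The function `rodrigues` and the `Mat3` alias are hypothetical names, not OpenCV API.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat3 = std::array<std::array<double, 3>, 3>;

// Rotation vector -> rotation matrix via the Rodrigues formula.
Mat3 rodrigues(const std::array<double, 3> &r) {
    const double theta = std::sqrt(r[0] * r[0] + r[1] * r[1] + r[2] * r[2]);
    Mat3 R{};
    if (theta < 1e-12) {  // (near-)zero rotation: return the identity
        for (int i = 0; i < 3; ++i) R[i][i] = 1.0;
        return R;
    }
    const double n[3] = {r[0] / theta, r[1] / theta, r[2] / theta};  // unit axis
    const double c = std::cos(theta), s = std::sin(theta);
    // Skew-symmetric matrix n^ (so that n^ v = n x v).
    const double N[3][3] = {{0.0, -n[2], n[1]}, {n[2], 0.0, -n[0]}, {-n[1], n[0], 0.0}};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            R[i][j] = (i == j ? c : 0.0) + (1.0 - c) * n[i] * n[j] + s * N[i][j];
    return R;
}
```

For example, `rodrigues({0.0, 0.0, std::acos(-1.0) / 2})` is a 90° rotation about z. It maps the x axis to the y axis, so R[1][0] = 1 and R[0][1] = −1.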


Hand-written pose estimation: first write a Gauss-Newton PnP solver, then solve the same problem with g2o.
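The 2×6 matrix J in the Gauss-Newton loop below is the derivative of the reprojection error e = u − proj(TP) with respect to a left perturbation δ = (ρ, φ) of the pose. The translation part ρ comes first, as in Sophus's se(3) ordering. A hand-derived Jacobian like this is easy to sanity-check against central finite differences. To first order, exp(δ^)·P ≈ P + ρ + φ × P, and only the first-order term affects the derivative at δ = 0. The standard-library sketch below relies on that. `Intrinsics`, `analyticJacobian` and the other names are hypothetical. The intrinsics in the usage note are the TUM values from K in the code above.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec2 = std::array<double, 2>;
using Vec3 = std::array<double, 3>;
using Jac26 = std::array<std::array<double, 6>, 2>;

struct Intrinsics { double fx, fy, cx, cy; };

// Pinhole projection of a camera-frame point.
Vec2 project(const Intrinsics &K, const Vec3 &p) {
    assert(p[2] > 0.0 && "point must be in front of the camera");
    return {K.fx * p[0] / p[2] + K.cx, K.fy * p[1] / p[2] + K.cy};
}

// Same entries as J in bundleAdjustmentGaussNewton: d e / d(rho, phi).
Jac26 analyticJacobian(const Intrinsics &K, const Vec3 &pc) {
    const double X = pc[0], Y = pc[1], iz = 1.0 / pc[2], iz2 = iz * iz;
    return {{{-K.fx * iz, 0.0, K.fx * X * iz2, K.fx * X * Y * iz2,
              -K.fx - K.fx * X * X * iz2, K.fx * Y * iz},
             {0.0, -K.fy * iz, K.fy * Y * iz2, K.fy + K.fy * Y * Y * iz2,
              -K.fy * X * Y * iz2, -K.fy * X * iz}}};
}

// First-order left perturbation: exp(delta^) p ~ p + rho + phi x p.
Vec3 perturb(const Vec3 &p, const std::array<double, 6> &d) {
    const double *rho = &d[0], *phi = &d[3];
    return {p[0] + rho[0] + phi[1] * p[2] - phi[2] * p[1],
            p[1] + rho[1] + phi[2] * p[0] - phi[0] * p[2],
            p[2] + rho[2] + phi[0] * p[1] - phi[1] * p[0]};
}

// Central-difference Jacobian of e(delta) = u_obs - project(perturb(pc, delta)).
Jac26 numericJacobian(const Intrinsics &K, const Vec3 &pc, double h = 1e-6) {
    Jac26 J{};
    for (int k = 0; k < 6; ++k) {
        std::array<double, 6> dp{}, dm{};
        dp[k] = h;
        dm[k] = -h;
        const Vec2 up = project(K, perturb(pc, dp));
        const Vec2 um = project(K, perturb(pc, dm));
        // u_obs cancels in the difference, and e = u_obs - proj flips the sign.
        for (int r = 0; r < 2; ++r) J[r][k] = -(up[r] - um[r]) / (2.0 * h);
    }
    return J;
}

// Largest absolute difference between the analytic and numeric Jacobians.
double maxJacobianError(const Intrinsics &K, const Vec3 &pc) {
    const Jac26 Ja = analyticJacobian(K, pc), Jn = numericJacobian(K, pc);
    double err = 0.0;
    for (int r = 0; r < 2; ++r)
        for (int k = 0; k < 6; ++k) err = std::fmax(err, std::fabs(Ja[r][k] - Jn[r][k]));
    return err;
}
```

With `Intrinsics K{520.9, 521.0, 325.1, 249.7}`, `maxJacobianError(K, {0.3, -0.2, 2.0})` should be tiny, at roughly finite-difference rounding level. A sign or index slip in J would instead show up as an error on the order of the focal length.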

void bundleAdjustmentGaussNewton(

const VecVector3d &points_3d,

const VecVector2d &points_2d,

const Mat &K,

Sophus::SE3d &pose) {

typedef Eigen::Matrix<double, 6, 1> Vector6d;

const int iterations = 10;

double cost = 0, lastCost = 0;

double fx = K.at<double>(0, 0);

double fy = K.at<double>(1, 1);

double cx = K.at<double>(0, 2);

double cy = K.at<double>(1, 2);

for (int iter = 0; iter < iterations; iter++) {

Eigen::Matrix<double, 6, 6> H = Eigen::Matrix<double, 6, 6>::Zero();

Vector6d b = Vector6d::Zero();

cost = 0;

// compute cost

for (int i = 0; i < points_3d.size(); i++) {

Eigen::Vector3d pc = pose * points_3d[i];

double inv_z = 1.0 / pc[2];

double inv_z2 = inv_z * inv_z;

Eigen::Vector2d proj(fx * pc[0] / pc[2] + cx, fy * pc[1] / pc[2] + cy);

Eigen::Vector2d e = points_2d[i] - proj;

cost += e.squaredNorm();

Eigen::Matrix<double, 2, 6> J;

J << -fx * inv_z,

0,

fx * pc[0] * inv_z2,

fx * pc[0] * pc[1] * inv_z2,

-fx - fx * pc[0] * pc[0] * inv_z2,

fx * pc[1] * inv_z,

0,

-fy * inv_z,

fy * pc[1] * inv_z2,

fy + fy * pc[1] * pc[1] * inv_z2,

-fy * pc[0] * pc[1] * inv_z2,

-fy * pc[0] * inv_z;

H += J.transpose() * J;

b += -J.transpose() * e;

}

Vector6d dx;

dx = H.ldlt().solve(b);