1. 系统环境

- Ubuntu 18.04

- 其余的配置与上一篇博客中相同(【图像与点云融合教程(一)】Ubuntu20下配置海康相机和LIVOX AVIA雷达 ),这里不再赘述,请完成上一篇博客的配置工作再进行本篇博客。

2. 安装 Ceres

- 安装一些依赖库:

# CMake sudo apt-get install cmake # google-glog + gflags sudo apt-get install libgoogle-glog-dev libgflags-dev # Use ATLAS for BLAS & LAPACK sudo apt-get install libatlas-base-dev # Eigen3 sudo apt-get install libeigen3-dev # SuiteSparse (optional) sudo apt-get install libsuitesparse-dev - 下载 Ceres 源码:http://ceres-solver.org/ceres-solver-2.1.0.tar.gz

- 编译安装 Ceres:

tar zxf ceres-solver-2.1.0.tar.gz mkdir ceres-bin cd ceres-bin cmake ../ceres-solver-2.1.0 make -j8 make test # Optionally install Ceres, it can also be exported using CMake which # allows Ceres to be used without requiring installation, see the documentation # for the EXPORT_BUILD_DIR option for more information. sudo make install

3. 编译标定源码

- 进入

livox_ros_driver所在工作空间ws_livox/src目录下,下载源码并编译(官方中文文档这里写的不清楚,建议参考英文文档!):git clone https://github.com/Livox-SDK/livox_camera_lidar_calibration.git cd .. # 回到工作空间根目录 catkin_make source devel/setup.bash

4. 相机标定&内参计算

- 打印标定板或使用屏幕显示标定板:原图下载链接

- 使用海康相机拍摄各个角度的bmp格式的标定板图片,存放在

src/livox_camera_lidar_calibration/data/camera/photos下,并将图片名称写入src/livox_camera_lidar_calibration/data/camera/in.txt文件中: - Linux下控制相机拍摄的Python脚本见附录1(主函数中有图像参数和使用按键设置)

python3 mvs_camera_calibration_x64.py

- 在

src/livox_camera_lidar_calibration/launch/cameraCalib.launch中修改图片和文件路径,以及标定板的相关参数(详细见代码注释):

<?xml version="1.0" encoding="UTF-8"?>

<launch>

<param name="camera_in_path" value="$(find camera_lidar_calibration)/data/camera/in.txt" /> <!-- the file to contain all the photos -->

<param name="camera_folder_path" value="$(find camera_lidar_calibration)/data/camera/photos/" /> <!-- the file to contain all the photos -->

<param name="result_path" value="$(find camera_lidar_calibration)/data/camera/result.txt" /> <!-- the file to save the intrinsic data -->

<!-- 交点的个数 -->

<param name="row_number" type="int" value="9" /> <!-- the number of block on the row -->

<param name="col_number" type="int" value="6" /> <!-- the number of block on the column -->

<!-- 格子的大小,单位是mm -->

<param name="width" type="int" value="25" /> <!-- the width of each block on the chessboard(mm) -->

<param name="height" type="int" value="25" /> <!-- the height of each block on the chessboard(mm)-->

<node pkg="camera_lidar_calibration" name="cameraCalib" type="cameraCalib" output="screen"></node>

</launch>

- 执行相机标定脚本(建议先看完本节,使用附录里我修改过的脚本计算相机内参):

source ./devel/setup.sh roslaunch camera_lidar_calibration cameraCalib.launch - 标定的相机参数会被写入

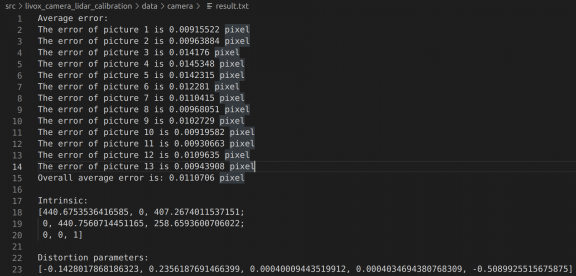

src/livox_camera_lidar_calibration/data/camera/result.txt中,如下图所示:

- 标定结果:

- 一个3x3的内参矩阵(IntrinsicMatrix)

- 5个畸变纠正参数 k1, k2, p1, p2, k3

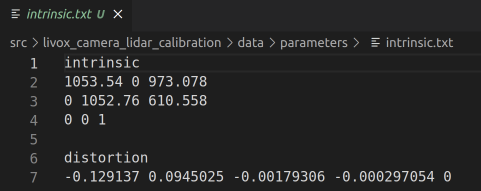

- 将相机的内参和畸变纠正参数以下图的格式保存在

src/livox_camera_lidar_calibration/data/parameters/intrinsic.txt- distortion下面对应5个畸变纠正参数,按顺序是k1和k2 (RadialDistortion),p1和p2 (TangentialDistortion),最后一个是k3,一般默认是0

- 正常通过脚本

src/livox_camera_lidar_calibration/src/cameraCalib.cpp计算出的k3不为 0,这里我修改了该脚本,直接将结果写入intrinsic.txt文件,k3直写为 0(否则畸变纠正存在问题),省去了自己创建文件并按照格式写入数据的繁琐,代码详见附录3。

5. 相机雷达联合标定

5.1 数据采集

- 检查标定板角点是否在照片和点云中:

- 标定场景最好选择一个较为空旷的环境,这样方便识别标定板,并且保证雷达到标定板有3米以上。

- 可以使用低反射率泡棉制作的标定板(1m x 1.5m)(_注:一开始选择的是黑色电视,但是表面存在反射,导致平面上点云强度不均匀,后续换成了表面不反光的白色的桌子,经测试白色的物体也能用,点云不会管物体是什么颜色,图像中也仅仅是标记 4 个角点的位置,用不到 RGB 值_)

roslaunch livox_ros_driver livox_lidar_rviz.launch # 启动雷达 python3 mvs_test_x64.py # 启动相机 - 在这一步根据雷达的 FOV 调整相机照片的分辨率,官方在 Github 上给出的建议是“_在雷达和相机固定之后,选择一个合适的场景同时打开雷达和相机的上位机显示实时的图像,然后根据雷达视角里面的物体直接剪裁相机的FOV,直到它们能包含同样的场景_”。

- 拍摄照片(代码见附录2)+录制点云:

- 选取至少10个左右(_我一共拍摄了 8 张图像和点云,最后也够用_)不同的角度和距离来摆放标定板(参考相机内参的标定物摆放),左右位置和不同的偏向角度最好都有采集数据

- 每个位置保存一张照片和10s左右的rosbag即可

python3 mvs_joint_calibration_x64.py # 按下 t 拍照 roslaunch livox_ros_driver livox_lidar_msg.launch # 启动雷达 # 在根目录下逐级创建用于保存 bag 文件的文件夹 mkdir -p src/livox_camera_lidar_calibration/data/lidar rosbag record -O src/livox_camera_lidar_calibration/data/lidar/0.bag /livox/lidar # 录制点云,输出到指定文件夹下,便于后续操作,文件名从序号 0 开始标

- 数据采集完成后,照片会自动放在

data/photo文件夹下;雷达 rosbag 会自动放在data/lidar文件夹下。

5.2 相机标定数据获取

- 获得照片中的角点坐标,从左上角逆时针开始标 4 个角点(这里我将官方的脚本改写了,从每次只读取一张图片标记角点,改为读取文件夹下所有图片进行标记,代码详见附录4):

roslaunch camera_lidar_calibration cornerMultiPhotosChoose.launch

5.3 雷达标定数据获取

-

运行命令将rosbag批量转为PCD文件,保存在默认路径

data/pcdFiles:roslaunch camera_lidar_calibration pcdTransfer.launch -

获取点云中的角点坐标,从左上角逆时针开始标 4 个角点,按住shift+左键点击得到选中的点坐标,角点顺序与图片一致(从左上角逆时针开始标 4 个角点)。

pcl_viewer -use_point_picking 0.pcd -

将 xyz 坐标保存在 data/corner_lidar.txt 中,格式如下:

5.4 外参计算

-

执行标定脚本(注:参数文件路径需要修改),外参结果以齐次矩阵的格式保存到

data/parameters/extrinsic.txt下:roslaunch camera_lidar_calibration getExt1.launch -

至此,相机雷达联合标定工作已经结束。

5.5 测试

- 投影点云到照片(_注:看不出什么明显的效果_):

roslaunch camera_lidar_calibration projectCloud.launch

- 点云着色:

roslaunch camera_lidar_calibration colorLidar.launch

附录1

- 放置在

src/livox_camera_lidar_calibration/scripts/mvs_camera_calibration_x64.py,代码会逐级创建文件夹src/livox_camera_lidar_calibration/data/camera/photos以保存用于相机标定的照片。

import sys

from ctypes import *

import os

import numpy as np

import time

import cv2

sys.path.append("/opt/MVS/Samples/64/Python/MvImport")

from MvCameraControl_class import *

class HKCamera():

def __init__(self, CameraIdx=0, log_path=None):

# enumerate all the camera devices

deviceList = self.enum_devices()

# generate a camera instance

self.camera = self.open_camera(deviceList, CameraIdx, log_path)

self.start_camera()

def __del__(self):

if self.camera is None:

return

# 停止取流

ret = self.camera.MV_CC_StopGrabbing()

if ret != 0:

raise Exception("stop grabbing fail! ret[0x%x]" % ret)

# 关闭设备

ret = self.camera.MV_CC_CloseDevice()

if ret != 0:

raise Exception("close deivce fail! ret[0x%x]" % ret)

# 销毁句柄

ret = self.camera.MV_CC_DestroyHandle()

if ret != 0:

raise Exception("destroy handle fail! ret[0x%x]" % ret)

@staticmethod

def enum_devices(device=0, device_way=False):

"""

device = 0 枚举网口、USB口、未知设备、cameralink 设备

device = 1 枚举GenTL设备

"""

if device_way == False:

if device == 0:

cameraType = MV_GIGE_DEVICE | MV_USB_DEVICE | MV_UNKNOW_DEVICE | MV_1394_DEVICE | MV_CAMERALINK_DEVICE

deviceList = MV_CC_DEVICE_INFO_LIST()

# 枚举设备

ret = MvCamera.MV_CC_EnumDevices(cameraType, deviceList)

if ret != 0:

raise Exception("enum devices fail! ret[0x%x]" % ret)

return deviceList

else:

pass

elif device_way == True:

pass

def open_camera(self, deviceList, CameraIdx, log_path):

# generate a camera instance

camera = MvCamera()

# 选择设备并创建句柄

stDeviceList = cast(deviceList.pDeviceInfo[CameraIdx], POINTER(MV_CC_DEVICE_INFO)).contents

if log_path is not None:

ret = self.camera.MV_CC_SetSDKLogPath(log_path)

if ret != 0:

raise Exception("set Log path fail! ret[0x%x]" % ret)

# 创建句柄,生成日志

ret = camera.MV_CC_CreateHandle(stDeviceList)

if ret != 0:

raise Exception("create handle fail! ret[0x%x]" % ret)

else:

# 创建句柄,不生成日志

ret = camera.MV_CC_CreateHandleWithoutLog(stDeviceList)

if ret != 0:

raise Exception("create handle fail! ret[0x%x]" % ret)

# 打开相机

ret = camera.MV_CC_OpenDevice(MV_ACCESS_Exclusive, 0)

if ret != 0:

raise Exception("open device fail! ret[0x%x]" % ret)

return camera

def start_camera(self):

stParam = MVCC_INTVALUE()

memset(byref(stParam), 0, sizeof(MVCC_INTVALUE))

ret = self.camera.MV_CC_GetIntValue("PayloadSize", stParam)

if ret != 0:

raise Exception("get payload size fail! ret[0x%x]" % ret)

self.nDataSize = stParam.nCurValue

self.pData = (c_ubyte * self.nDataSize)()

self.stFrameInfo = MV_FRAME_OUT_INFO_EX()

memset(byref(self.stFrameInfo), 0, sizeof(self.stFrameInfo))

self.camera.MV_CC_StartGrabbing()

def get_Value(self, param_type, node_name):

"""

:param cam: 相机实例

:param_type: 获取节点值得类型

:param node_name: 节点名 可选 int 、float 、enum 、bool 、string 型节点

:return: 节点值

"""

if param_type == "int_value":

stParam = MVCC_INTVALUE_EX()

memset(byref(stParam), 0, sizeof(MVCC_INTVALUE_EX))

ret = self.camera.MV_CC_GetIntValueEx(node_name, stParam)

if ret != 0:

raise Exception("获取 int 型数据 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

return stParam.nCurValue

elif param_type == "float_value":

stFloatValue = MVCC_FLOATVALUE()

memset(byref(stFloatValue), 0, sizeof(MVCC_FLOATVALUE))

ret = self.camera.MV_CC_GetFloatValue(node_name, stFloatValue)

if ret != 0:

raise Exception("获取 float 型数据 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

return stFloatValue.fCurValue

elif param_type == "enum_value":

stEnumValue = MVCC_ENUMVALUE()

memset(byref(stEnumValue), 0, sizeof(MVCC_ENUMVALUE))

ret = self.camera.MV_CC_GetEnumValue(node_name, stEnumValue)

if ret != 0:

raise Exception("获取 enum 型数据 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

return stEnumValue.nCurValue

elif param_type == "bool_value":

stBool = c_bool(False)

ret = self.camera.MV_CC_GetBoolValue(node_name, stBool)

if ret != 0:

raise Exception("获取 bool 型数据 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

return stBool.value

elif param_type == "string_value":

stStringValue = MVCC_STRINGVALUE()

memset(byref(stStringValue), 0, sizeof(MVCC_STRINGVALUE))

ret = self.camera.MV_CC_GetStringValue(node_name, stStringValue)

if ret != 0:

raise Exception("获取 string 型数据 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

return stStringValue.chCurValue

else:

return None

def set_Value(self, param_type, node_name, node_value):

"""

:param cam: 相机实例

:param param_type: 需要设置的节点值得类型

int:

float:

enum: 参考于客户端中该选项的 Enum Entry Value 值即可

bool: 对应 0 为关,1 为开

string: 输入值为数字或者英文字符,不能为汉字

:param node_name: 需要设置的节点名

:param node_value: 设置给节点的值

:return:

"""

if param_type == "int_value":

ret = self.camera.MV_CC_SetIntValueEx(node_name, int(node_value))

if ret != 0:

raise Exception("设置 int 型数据节点 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

elif param_type == "float_value":

ret = self.camera.MV_CC_SetFloatValue(node_name, float(node_value))

if ret != 0:

raise Exception("设置 float 型数据节点 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

elif param_type == "enum_value":

ret = self.camera.MV_CC_SetEnumValue(node_name, node_value)

if ret != 0:

raise Exception("设置 enum 型数据节点 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

elif param_type == "bool_value":

ret = self.camera.MV_CC_SetBoolValue(node_name, node_value)

if ret != 0:

raise Exception("设置 bool 型数据节点 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

elif param_type == "string_value":

ret = self.camera.MV_CC_SetStringValue(node_name, str(node_value))

if ret != 0:

raise Exception("设置 string 型数据节点 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

def set_exposure_time(self, exp_time):

self.set_Value(param_type="float_value", node_name="ExposureTime", node_value=exp_time)

def get_exposure_time(self):

return self.get_Value(param_type="float_value", node_name="ExposureTime")

def get_image(self, width=None):

"""

:param cam: 相机实例

:active_way:主动取流方式的不同方法 分别是(getImagebuffer)(getoneframetimeout)

:return:

"""

ret = self.camera.MV_CC_GetOneFrameTimeout(self.pData, self.nDataSize, self.stFrameInfo, 1000)

if ret == 0:

image = np.asarray(self.pData).reshape((self.stFrameInfo.nHeight, self.stFrameInfo.nWidth, 3))

if width is not None:

image = cv2.resize(image, (width, int(self.stFrameInfo.nHeight * width / self.stFrameInfo.nWidth)))

pass

image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

return image

else:

return None

def show_runtime_info(self, image):

exp_time = self.get_exposure_time()

cv2.putText(image, ("exposure time = %1.1fms" % (exp_time * 0.001)), (20, 50), cv2.FONT_HERSHEY_SIMPLEX, 0.5, 255, 1)

if __name__ == '__main__':

camera = HKCamera()

try:

cnt = 0

while True:

image = camera.get_image(width=1920)

if image is not None:

camera.show_runtime_info(image)

cv2.imshow("", image)

key = cv2.waitKey(50) & 0xFF

if key == ord('e') or key == ord('E'):

cv2.destroyAllWindows()

break

if key == ord('t') or key == ord('T'):

cv2.imwrite("./data/" + str(cnt) + ".bmp", image)

cnt += 1

except Exception as e:

print(e)

附录2

- 放置在

src/livox_camera_lidar_calibration/scripts/mvs_joint_calibration_x64.py,代码会创建文件夹src/livox_camera_lidar_calibration/data/photo以保存用于联合标定的照片。

# 此脚本拍摄的照片用于相机雷达联合标定

import sys

from ctypes import *

import os

import numpy as np

import time

#from Camera import Camera

#from FTPService import FTPService

#from GPIO_set import GPIO_set

import cv2

#import configparser

sys.path.append("/opt/MVS/Samples/64/Python/MvImport") #打开MVS中的MvImport文件,对于不同系统打开的文件路径跟随实际文件路径变化即可

from MvCameraControl_class import * #调用了MvCameraControl_class.py文件

# 创建文件夹

def mkdir(path):

folder = os.path.exists(path)

if not folder:

os.makedirs(path)

print(f'-- new folder "{path}" --')

else:

print(f'-- the folder "{path}" is already here --')

class HKCamera():

def __init__(self, CameraIdx=0, log_path=None):

# enumerate all the camera devices

deviceList = self.enum_devices()

# generate a camera instance

self.camera = self.open_camera(deviceList, CameraIdx, log_path)

self.start_camera()

def __del__(self):

if self.camera is None:

return

# 停止取流

ret = self.camera.MV_CC_StopGrabbing()

if ret != 0:

raise Exception("stop grabbing fail! ret[0x%x]" % ret)

# 关闭设备

ret = self.camera.MV_CC_CloseDevice()

if ret != 0:

raise Exception("close deivce fail! ret[0x%x]" % ret)

# 销毁句柄

ret = self.camera.MV_CC_DestroyHandle()

if ret != 0:

raise Exception("destroy handle fail! ret[0x%x]" % ret)

@staticmethod

def enum_devices(device=0, device_way=False):

"""

device = 0 枚举网口、USB口、未知设备、cameralink 设备

device = 1 枚举GenTL设备

"""

if device_way == False:

if device == 0:

cameraType = MV_GIGE_DEVICE | MV_USB_DEVICE | MV_UNKNOW_DEVICE | MV_1394_DEVICE | MV_CAMERALINK_DEVICE

deviceList = MV_CC_DEVICE_INFO_LIST()

# 枚举设备

ret = MvCamera.MV_CC_EnumDevices(cameraType, deviceList)

if ret != 0:

raise Exception("enum devices fail! ret[0x%x]" % ret)

return deviceList

else:

pass

elif device_way == True:

pass

def open_camera(self, deviceList, CameraIdx, log_path):

# generate a camera instance

camera = MvCamera()

# 选择设备并创建句柄

stDeviceList = cast(deviceList.pDeviceInfo[CameraIdx], POINTER(MV_CC_DEVICE_INFO)).contents

if log_path is not None:

ret = self.camera.MV_CC_SetSDKLogPath(log_path)

if ret != 0:

raise Exception("set Log path fail! ret[0x%x]" % ret)

# 创建句柄,生成日志

ret = camera.MV_CC_CreateHandle(stDeviceList)

if ret != 0:

raise Exception("create handle fail! ret[0x%x]" % ret)

else:

# 创建句柄,不生成日志

ret = camera.MV_CC_CreateHandleWithoutLog(stDeviceList)

if ret != 0:

raise Exception("create handle fail! ret[0x%x]" % ret)

# 打开相机

ret = camera.MV_CC_OpenDevice(MV_ACCESS_Exclusive, 0)

if ret != 0:

raise Exception("open device fail! ret[0x%x]" % ret)

return camera

def start_camera(self):

stParam = MVCC_INTVALUE()

memset(byref(stParam), 0, sizeof(MVCC_INTVALUE))

ret = self.camera.MV_CC_GetIntValue("PayloadSize", stParam)

if ret != 0:

raise Exception("get payload size fail! ret[0x%x]" % ret)

self.nDataSize = stParam.nCurValue

self.pData = (c_ubyte * self.nDataSize)()

self.stFrameInfo = MV_FRAME_OUT_INFO_EX()

memset(byref(self.stFrameInfo), 0, sizeof(self.stFrameInfo))

self.camera.MV_CC_StartGrabbing()

def get_Value(self, param_type, node_name):

"""

:param cam: 相机实例

:param_type: 获取节点值得类型

:param node_name: 节点名 可选 int 、float 、enum 、bool 、string 型节点

:return: 节点值

"""

if param_type == "int_value":

stParam = MVCC_INTVALUE_EX()

memset(byref(stParam), 0, sizeof(MVCC_INTVALUE_EX))

ret = self.camera.MV_CC_GetIntValueEx(node_name, stParam)

if ret != 0:

raise Exception("获取 int 型数据 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

return stParam.nCurValue

elif param_type == "float_value":

stFloatValue = MVCC_FLOATVALUE()

memset(byref(stFloatValue), 0, sizeof(MVCC_FLOATVALUE))

ret = self.camera.MV_CC_GetFloatValue(node_name, stFloatValue)

if ret != 0:

raise Exception("获取 float 型数据 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

return stFloatValue.fCurValue

elif param_type == "enum_value":

stEnumValue = MVCC_ENUMVALUE()

memset(byref(stEnumValue), 0, sizeof(MVCC_ENUMVALUE))

ret = self.camera.MV_CC_GetEnumValue(node_name, stEnumValue)

if ret != 0:

raise Exception("获取 enum 型数据 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

return stEnumValue.nCurValue

elif param_type == "bool_value":

stBool = c_bool(False)

ret = self.camera.MV_CC_GetBoolValue(node_name, stBool)

if ret != 0:

raise Exception("获取 bool 型数据 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

return stBool.value

elif param_type == "string_value":

stStringValue = MVCC_STRINGVALUE()

memset(byref(stStringValue), 0, sizeof(MVCC_STRINGVALUE))

ret = self.camera.MV_CC_GetStringValue(node_name, stStringValue)

if ret != 0:

raise Exception("获取 string 型数据 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

return stStringValue.chCurValue

else:

return None

def set_Value(self, param_type, node_name, node_value):

"""

:param cam: 相机实例

:param param_type: 需要设置的节点值得类型

int:

float:

enum: 参考于客户端中该选项的 Enum Entry Value 值即可

bool: 对应 0 为关,1 为开

string: 输入值为数字或者英文字符,不能为汉字

:param node_name: 需要设置的节点名

:param node_value: 设置给节点的值

:return:

"""

if param_type == "int_value":

ret = self.camera.MV_CC_SetIntValueEx(node_name, int(node_value))

if ret != 0:

raise Exception("设置 int 型数据节点 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

elif param_type == "float_value":

ret = self.camera.MV_CC_SetFloatValue(node_name, float(node_value))

if ret != 0:

raise Exception("设置 float 型数据节点 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

elif param_type == "enum_value":

ret = self.camera.MV_CC_SetEnumValue(node_name, node_value)

if ret != 0:

raise Exception("设置 enum 型数据节点 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

elif param_type == "bool_value":

ret = self.camera.MV_CC_SetBoolValue(node_name, node_value)

if ret != 0:

raise Exception("设置 bool 型数据节点 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

elif param_type == "string_value":

ret = self.camera.MV_CC_SetStringValue(node_name, str(node_value))

if ret != 0:

raise Exception("设置 string 型数据节点 %s 失败 ! 报错码 ret[0x%x]" % (node_name, ret))

def set_exposure_time(self, exp_time):

self.set_Value(param_type="float_value", node_name="ExposureTime", node_value=exp_time)

def get_exposure_time(self):

return self.get_Value(param_type="float_value", node_name="ExposureTime")

def get_image(self, width=None):

"""

:param cam: 相机实例

:active_way:主动取流方式的不同方法 分别是(getImagebuffer)(getoneframetimeout)

:return:

"""

ret = self.camera.MV_CC_GetOneFrameTimeout(self.pData, self.nDataSize, self.stFrameInfo, 1000)

if ret == 0:

image = np.asarray(self.pData).reshape((self.stFrameInfo.nHeight, self.stFrameInfo.nWidth, 3))

if width is not None:

image = cv2.resize(image, (width, int(self.stFrameInfo.nHeight * width / self.stFrameInfo.nWidth)))

pass

image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

return image

else:

return None

def show_runtime_info(self, image):

exp_time = self.get_exposure_time()

cv2.putText(image, ("exposure time = %1.1fms" % (exp_time * 0.001)), (20, 50), cv2.FONT_HERSHEY_SIMPLEX, 0.5, 255, 1)

if __name__ == '__main__':

camera = HKCamera()

photo_folder = "../data/photo"

mkdir(photo_folder)

try:

cnt = 0

while True:

image = camera.get_image(width=1920)

if image is not None:

camera.show_runtime_info(image)

cv2.imshow("", image)

key = cv2.waitKey(50) & 0xFF

if key == ord('e') or key == ord('E'):

cv2.destroyAllWindows()

break

if key == ord('t') or key == ord('T'):

cv2.imwrite(photo_folder + "/" + str(cnt) + ".bmp", image)

cnt += 1

except Exception as e:

print(e)

附录3

src/livox_camera_lidar_calibration/launch/cameraCalib_new.launch

<?xml version="1.0" encoding="UTF-8"?>

<launch>

<param name="camera_in_path" value="$(find camera_lidar_calibration)/data/camera/in.txt" /> <!-- the file to contain all the photos -->

<param name="camera_folder_path" value="$(find camera_lidar_calibration)/data/camera/photos/" /> <!-- the file to contain all the photos -->

<param name="result_path" value="$(find camera_lidar_calibration)/data/camera/result.txt" /> <!-- the file to save the intrinsic data -->

<param name="intrinsic_path" value="$(find camera_lidar_calibration)/data/parameters/intrinsic.txt" />

<param name="row_number" type="int" value="9" /> <!-- the number of block on the row -->

<param name="col_number" type="int" value="6" /> <!-- the number of block on the column -->

<param name="width" type="int" value="25" /> <!-- the width of each block on the chessboard(mm) -->

<param name="height" type="int" value="25" /> <!-- the height of each block on the chessboard(mm)-->

<node pkg="camera_lidar_calibration" name="cameraCalib_new" type="cameraCalib_new" output="screen"></node>

</launch>

src/livox_camera_lidar_calibration/src/cameraCalib_new.cpp

// 由于原来的代码保存的标定文件还需要手动修改格式写入到另一个文件中才能进行联合标定

// 这里我对代码进行修改以适应联合标定的参数文件

#include <iostream>

#include <sstream>

#include <time.h>

#include <stdio.h>

#include <fstream>

#include <ros/ros.h>

#include <opencv2/core/core.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#include <opencv2/calib3d/calib3d.hpp>

#include <opencv2/highgui/highgui.hpp>

using namespace cv;

using namespace std;

string camera_in_path, camera_folder_path, result_path, intrinsic_path;

int row_number, col_number, width, height;

void getParameters() {

cout << "Get the parameters from the launch file" << endl;

if (!ros::param::get("camera_in_path", camera_in_path)) {

cout << "Can not get the value of camera_in_path" << endl;

exit(1);

}

if (!ros::param::get("camera_folder_path", camera_folder_path)) {

cout << "Can not get the value of camera_folder_path" << endl;

exit(1);

}

if (!ros::param::get("result_path", result_path)) {

cout << "Can not get the value of result_path" << endl;

exit(1);

}

if (!ros::param::get("intrinsic_path", intrinsic_path)) {

cout << "Can not get the value of intrinsic_path" << endl;

exit(1);

}

if (!ros::param::get("row_number", row_number)) {

cout << "Can not get the value of row_number" << endl;

exit(1);

}

if (!ros::param::get("col_number", col_number)) {

cout << "Can not get the value of col_number" << endl;

exit(1);

}

if (!ros::param::get("width", width)) {

cout << "Can not get the value of width" << endl;

exit(1);

}

if (!ros::param::get("height", height)) {

cout << "Can not get the value of height" << endl;

exit(1);

}

}

int main(int argc, char **argv) {

ros::init(argc, argv, "cameraCalib");

getParameters();

ifstream fin(camera_in_path); /* 标定所用图像文件的路径 */

ofstream fout(result_path); /* 保存标定结果的文件 */

// 读取每一幅图像,从中提取出角点,然后对角点进行亚像素精确化

int image_count = 0; /* 图像数量 */

Size image_size; /* 图像的尺寸 */

Size board_size = Size(row_number, col_number); /* 标定板上每行、列的角点数 */

vector<Point2f> image_points_buf; /* 缓存每幅图像上检测到的角点 */

vector<vector<Point2f>> image_points_seq; /* 保存检测到的所有角点 */

string filename; // 图片名

vector<string> filenames;

while (getline(fin, filename) && filename.size() > 1) {

++image_count;

filename = camera_folder_path + filename;

cout << filename << endl;

Mat imageInput = imread(filename);

if (imageInput.empty()) { // use the file name to search the photo

break;

}

filenames.push_back(filename);

// 读入第一张图片时获取图片大小

if (image_count == 1) {

image_size.width = imageInput.cols;

image_size.height = imageInput.rows;

}

/* 提取角点 */

if (0 == findChessboardCorners(imageInput, board_size, image_points_buf)) {

//cout << "can not find chessboard corners!\n"; // 找不到角点

cout << "**" << filename << "** can not find chessboard corners!\n";

exit(1);

}

else {

Mat view_gray;

cvtColor(imageInput, view_gray, cv::COLOR_RGB2GRAY); // 转灰度图

/* 亚像素精确化 */

// image_points_buf 初始的角点坐标向量,同时作为亚像素坐标位置的输出

// Size(5,5) 搜索窗口大小

// (-1,-1)表示没有死区

// TermCriteria 角点的迭代过程的终止条件, 可以为迭代次数和角点精度两者的组合

cornerSubPix(view_gray, image_points_buf, Size(5, 5), Size(-1, -1), TermCriteria(cv::TermCriteria::EPS + cv::TermCriteria::MAX_ITER, 30, 0.1));

image_points_seq.push_back(image_points_buf); // 保存亚像素角点

/* 在图像上显示角点位置 */

drawChessboardCorners(view_gray, board_size, image_points_buf, false); // 用于在图片中标记角点

imshow("Camera Calibration", view_gray); // 显示图片

waitKey(1000); //暂停1S

}

}

// int CornerNum = board_size.width * board_size.height; // 每张图片上总的角点数

//-------------以下是摄像机标定------------------

/*棋盘三维信息*/

Size square_size = Size(width, height); /* 实际测量得到的标定板上每个棋盘格的大小 */

vector<vector<Point3f>> object_points; /* 保存标定板上角点的三维坐标 */

/*内外参数*/

Mat cameraMatrix = Mat(3, 3, CV_32FC1, Scalar::all(0)); /* 摄像机内参数矩阵 */

vector<int> point_counts; // 每幅图像中角点的数量

Mat distCoeffs = Mat(1, 5, CV_32FC1, Scalar::all(0)); /* 摄像机的5个畸变系数:k1,k2,p1,p2,k3 */

vector<Mat> tvecsMat; /* 每幅图像的旋转向量 */

vector<Mat> rvecsMat; /* 每幅图像的平移向量 */

/* 初始化标定板上角点的三维坐标 */

int i, j, t;

for (t = 0; t<image_count; t++) {

vector<Point3f> tempPointSet;

for (i = 0; i<board_size.height; i++) {

for (j = 0; j<board_size.width; j++) {

Point3f realPoint;

/* 假设标定板放在世界坐标系中z=0的平面上 */

realPoint.x = i * square_size.width;

realPoint.y = j * square_size.height;

realPoint.z = 0;

tempPointSet.push_back(realPoint);

}

}

object_points.push_back(tempPointSet);

}

/* 初始化每幅图像中的角点数量,假定每幅图像中都可以看到完整的标定板 */

for (i = 0; i<image_count; i++) {

point_counts.push_back(board_size.width * board_size.height);

}

/* 开始标定 */

// object_points 世界坐标系中的角点的三维坐标

// image_points_seq 每一个内角点对应的图像坐标点

// image_size 图像的像素尺寸大小

// cameraMatrix 输出,内参矩阵

// distCoeffs 输出,畸变系数

// rvecsMat 输出,旋转向量

// tvecsMat 输出,位移向量

// 0 标定时所采用的算法

calibrateCamera(object_points, image_points_seq, image_size, cameraMatrix, distCoeffs, rvecsMat, tvecsMat, 0);

//------------------------标定完成------------------------------------

// -------------------对标定结果进行评价------------------------------

double total_err = 0.0; /* 所有图像的平均误差的总和 */

double err = 0.0; /* 每幅图像的平均误差 */

vector<Point2f> image_points2; /* 保存重新计算得到的投影点 */

fout << "Average error: \n";

for (i = 0; i<image_count; i++) {

vector<Point3f> tempPointSet = object_points[i];

/* 通过得到的摄像机内外参数,对空间的三维点进行重新投影计算,得到新的投影点 */

projectPoints(tempPointSet, rvecsMat[i], tvecsMat[i], cameraMatrix, distCoeffs, image_points2);

/* 计算新的投影点和旧的投影点之间的误差*/

vector<Point2f> tempImagePoint = image_points_seq[i];

Mat tempImagePointMat = Mat(1, tempImagePoint.size(), CV_32FC2);

Mat image_points2Mat = Mat(1, image_points2.size(), CV_32FC2);

for (unsigned int j = 0; j < tempImagePoint.size(); j++) {

image_points2Mat.at<Vec2f>(0, j) = Vec2f(image_points2[j].x, image_points2[j].y);

tempImagePointMat.at<Vec2f>(0, j) = Vec2f(tempImagePoint[j].x, tempImagePoint[j].y);

}

err = norm(image_points2Mat, tempImagePointMat, NORM_L2);

total_err += err /= point_counts[i];

fout << "The error of picture " << i + 1 << " is " << err << " pixel" << endl;

}

fout << "Overall average error is: " << total_err / image_count << " pixel" << endl << endl;

//-------------------------评价完成---------------------------------------------

//-----------------------保存定标结果-------------------------------------------

Mat rotation_matrix = Mat(3, 3, CV_32FC1, Scalar::all(0)); /* 保存每幅图像的旋转矩阵 */

fout << "Intrinsic: " << endl;

fout << cameraMatrix << endl << endl;

fout << "Distortion parameters: " << endl;

fout << distCoeffs << endl << endl << endl;

cout << "Get result!" << endl;

fin.close();

fout.close();

ofstream fout_new(intrinsic_path);

fout_new << "intrinsic" << endl;

for (int i = 0; i < 3; ++i) {

fout_new << cameraMatrix.at<double>(i,0) << " "

<< cameraMatrix.at<double>(i,1) << " "

<< cameraMatrix.at<double>(i,2) << endl;

}

fout_new << endl;

fout_new << "distortion" << endl;

for (int i = 0; i < 4; ++i) {

fout_new << distCoeffs.at<double>(0,i) << " ";

}

fout_new << "0" << endl;

fout_new.close();

return 0;

}

附录4

src/livox_camera_lidar_calibration/launch/cornerMultiPhotosChoose.launch

<?xml version="1.0" encoding="UTF-8"?>

<launch>

<param name="intrinsic_path" value="$(find camera_lidar_calibration)/data/parameters/intrinsic.txt" /> <!-- intrinsic file -->

<param name="input_photos_path" value="$(find camera_lidar_calibration)/data/photo/" /> <!-- photo to find the corner -->

<param name="ouput_path" value="$(find camera_lidar_calibration)/data/corner_photo.txt" /> <!-- file to save the photo corner -->

<node pkg="camera_lidar_calibration" name="cornerMultiPhotosChoose" type="cornerMultiPhotosChoose" output="screen"></node>

</launch>

- 这里我将官方的脚本改写了,从每次只读取一张图片标记角点,改为读取文件夹下所有图片进行标记

// 从记录标定板四个角点并手动输入像素坐标改为使用鼠标在窗口中选点。

// 将launch制定的文件夹下所有的图片的角点进行获取

#include <ros/ros.h>

#include <image_transport/image_transport.h>

#include <opencv2/highgui/highgui.hpp>

#include <cv_bridge/cv_bridge.h>

#include <stdio.h>

#include <opencv2/imgproc/imgproc.hpp>

#include <iostream>

#include <fstream>

#include <opencv2/opencv.hpp>

#include <string>

// #include <filesystem>

#include <experimental/filesystem>

#include "common.h"

namespace fs = std::experimental::filesystem;

using namespace std;

void writeData(const string filename, const float x, const float y, uint mode);

cv::RNG random_number_generator;

string photos_path, output_name, intrinsic_path;

vector<cv::Point2f> corners_global; // 存储用户选取的四个角点

void writeData(const string filename, const string img_path, const float x, const float y, uint mode) {

ofstream outfile(filename.c_str(), ios_base::app);

if (!outfile) {

cout << "Can not open the file: " << filename << endl;

exit(0);

}

switch(mode) {

case(0):

outfile << img_path << endl;

outfile << "1" << endl;

break;

case(1):

outfile << "2" << endl;

break;

case(2):

outfile << "3" << endl;

break;

case(3):

outfile << "4" << endl;

break;

default:

cout << "[writeData] - Code error, unknown mode" << endl;

exit(0);

}

outfile << float2str(x) << " " << float2str(y) << endl;

}

void getParameters() {

cout << "Get the parameters from the launch file" << endl;

if (!ros::param::get("input_photos_path", photos_path)) {

cout << "Can not get the value of input_photo_path" << endl;

exit(1);

}

if (!ros::param::get("ouput_path", output_name)) {

cout << "Can not get the value of ouput_path" << endl;

exit(1);

}

if (!ros::param::get("intrinsic_path", intrinsic_path)) {

cout << "Can not get the value of intrinsic_path" << endl;

exit(1);

}

}

// 自定义鼠标回调函数

void onMouse(int event, int x, int y, int flags, void* param)

{

// 获取图像指针

cv::Mat* img = (cv::Mat*)param;

// 如果是左键单击

if (event == cv::EVENT_LBUTTONDOWN)

{

// 将所选点存入全局变量中

cv::Point2f p;

p.x = x;

p.y = y;

corners_global.push_back(p);

// 在图像上绘制一个红色圆点

cv::circle(*img, cv::Point(x,y), 3, cv::Scalar(0,0,255), -1);

// 在图像上显示坐标值

cv::putText(*img, cv::format("(%d,%d)",x,y), cv::Point(x+5,y-5), cv::FONT_HERSHEY_SIMPLEX, 0.5, cv::Scalar(0,0,255));

// 刷新窗口

cv::imshow("source", *img);

}

}

int main(int argc, char **argv) {

// 1. 初始化ROS节点和获取参数:

ros::init(argc, argv, "cornerPhoto");

getParameters();

// 遍历文件夹中的所有文件

for (const auto &entry : fs::directory_iterator(photos_path))

{

cout << entry.path() << endl;

// 检查文件是否为图片类型(这里只读取bmp格式)

if (entry.path().extension() == ".bmp")

{

// 2. 读入图片和畸变矫正:

cv::Mat gray_img, src_img;

src_img = cv::imread(entry.path().string());

// 检查图片是否成功读入

if (!src_img.empty())

{

// TODO: 在这里对读入的图片进行处理

vector<float> intrinsic;

getIntrinsic(intrinsic_path, intrinsic);

vector<float> distortion;

getDistortion(intrinsic_path, distortion);

// set intrinsic parameters of the camera

cv::Mat cameraMatrix = cv::Mat::eye(3, 3, CV_64F);

cameraMatrix.at<double>(0, 0) = intrinsic[0];

cameraMatrix.at<double>(0, 2) = intrinsic[2];

cameraMatrix.at<double>(1, 1) = intrinsic[4];

cameraMatrix.at<double>(1, 2) = intrinsic[5];

// set radial distortion and tangential distortion

cv::Mat distCoeffs = cv::Mat::zeros(5, 1, CV_64F);

distCoeffs.at<double>(0, 0) = distortion[0];

distCoeffs.at<double>(1, 0) = distortion[1];

distCoeffs.at<double>(2, 0) = distortion[2];

distCoeffs.at<double>(3, 0) = distortion[3];

distCoeffs.at<double>(4, 0) = distortion[4];

cv::Mat view, rview, map1, map2;

cv::Size imageSize = src_img.size();

cv::initUndistortRectifyMap(cameraMatrix, distCoeffs, cv::Mat(),cv::getOptimalNewCameraMatrix(cameraMatrix, distCoeffs, imageSize, 1, imageSize, 0), imageSize, CV_16SC2, map1, map2);

cv::remap(src_img, src_img, map1, map2, cv::INTER_LINEAR); // correct the distortion

// 3. 显示图片和获取用户输入的角点坐标::

cout << "Please choose the four corners" << endl;

cv::namedWindow("source");

cv::imshow("source", src_img);

// 设置鼠标回调函数,将图像指针作为参数传递给自定义函数

cv::Mat temp_img = src_img.clone();

cv::setMouseCallback("source", onMouse, &temp_img);

cv::waitKey(0);

cv::destroyWindow("source");

if (!corners_global.size()) {

cout << "No input corners, end process" << endl;

return 0;

}

// 4. 利用角点进行亚像素精确定位:

cv::Size winSize = cv::Size(5, 5);

cv::Size zerozone = cv::Size(-1, -1);

cv::TermCriteria criteria = cv::TermCriteria(cv::TermCriteria::EPS + cv::TermCriteria::MAX_ITER, 40, 0.001);

// cv::namedWindow("output");

cv::cvtColor(src_img, gray_img, cv::COLOR_BGR2GRAY);

cv::cornerSubPix(gray_img, corners_global, winSize, zerozone, criteria);

cv::Mat result_img = src_img.clone();

for(uint t = 0; t < corners_global.size(); ++t) {

cv::circle(result_img, corners_global[t], 3, cv::Scalar(random_number_generator.uniform(0, 255), random_number_generator.uniform(0, 255), random_number_generator.uniform(0, 255)), 1, 8, 0);

printf("(%.3f %.3f)", corners_global[t].x, corners_global[t].y);

writeData(output_name, entry.path().string(), corners_global[t].x, corners_global[t].y, t);

}

cout << endl << "Result saved, tap a random key to finish the process" << endl;

cv::namedWindow("output");

imshow("output", result_img);

cv::waitKey(0);

cv::destroyWindow("output");

corners_global.clear(); // 及时清空全局变量中的元素,否则后续不断加入新元素,代码会报错

}

else

{

std::cerr << "Error reading image: " << entry.path().string() << std::endl;

}

}

}

return 0;

}

评论(2)

您还未登录,请登录后发表或查看评论